Measuring research quality is a topic of growing interest to universities and research institutions. It has become a central issue in relation to the efficient allocation of public resources, which – in many countries and especially in Europe – represent the main component of university funding. Many countries – Australia, France, Italy, the Netherlands, Scandinavian countries, and the UK – have introduced national assessment exercises to gauge the quality of university research. The main criteria for evaluating research performance combine, in various ways, bibliometric indicators (Moed 2005, Nicolaisen 2007) and peer review (Bornmann 2011).

Assessing research quality in Italy

Since neither method dominates the other, it is worth studying whether the two can be combined to assess research quality (Butler 2007, Moed 2007). We offer initial evidence on this question from our experience on the panel that evaluated Italian research in Economics, Management, and Statistics during the national assessment exercise (VQR) for the period 2004–2010. The VQR used a sample of 590 journal articles randomly drawn from the population of 5,681 journal articles (out of nearly 12,000 journal and non-journal publications). Each article in this sample was evaluated both by bibliometric analysis and by informed peer review, which lets us assess whether the two approaches yield similar evaluations.

Like other post-publication research assessments, the VQR involves an informed peer review, in which reviewers know the authors and the publication outlets of the articles. As a result, any correlation between bibliometrics and peer review depends on both an intrinsic common assessment – as if the two evaluations were independent – and the influence exerted on the reviewer’s evaluation by the information about the outlet of the publication. This means, in particular, that our comparison is not an evaluation of the intrinsic validity of bibliometrics or peer review using the other method as a benchmark. Nonetheless, our analysis provides useful information for research policy and management.

If, as we find, the two approaches yield similar results, research policy evaluators can use bibliometrics to predict the outcome of large-scale research assessments based on informed peer review. Since bibliometrics is less costly – it does not mobilise a large number of reviewers – it can also serve as a managerial tool for monitoring research. For example, departments can use bibliometrics to predict their position in national assessments based on informed peer review. Because large-scale informed peer review can only be carried out with a lag of some years, bibliometric analysis offers signals for assessing research on a more continuous basis.

Classifying papers and journals

The VQR classified papers in four categories: A, B, C, and D. Under the VQR criterion, papers in category A should be in the top 20% of world papers in terms of quality, papers in category B in the next 20%, papers in category C in the following 10%, and papers in category D in the bottom 50%. The research assessment considered all the journals in which Italian academics in Economics, Management, or Statistics published at least one paper, and classified these journals as A, B, C, or D according to whether they fall, respectively, in the top 20%, 20–40%, 40–50%, or bottom 50% of the journals in terms of bibliometric impact. The bibliometric impact of a journal was computed using a combination of the ISI five-year impact factor (IF5) and the Article Influence Score (AIS). For non-ISI journals, the impact factor was imputed using the estimated correlation of IF5 and AIS with the journal’s Google Scholar index.[1]

Similarly, the informed peer review process asked two reviewers to classify each article as A, B, C, or D using the same criterion: top 20%, 20–40%, 40–50%, or bottom 50%. When the two reviewers disagreed on the class of an article, they discussed it (anonymously) with each other and with one member of the panel to reach a consensus class. Thus, ultimately, each of the 590 articles evaluated by both methods obtained two scores: one from the bibliometric evaluation and one from the informed peer review.
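The percentile rule for journals can be illustrated with a minimal sketch. It assumes a single impact score per journal is already available; the function name, the input format, and the handling of ties are illustrative assumptions, not the VQR's actual procedure, which combined IF5 and AIS as described in Bertocchi et al. (2013).

```python
# Class boundaries as cumulative shares from the top of the ranking,
# taken from the text: A = top 20%, B = 20-40%, C = 40-50%, D = bottom 50%.
CLASS_CUTOFFS = [(0.20, "A"), (0.40, "B"), (0.50, "C"), (1.00, "D")]


def classify_journals(impact):
    """Assign a class to each journal from its rank by impact.

    `impact` is a hypothetical dict {journal_name: impact_score},
    where a higher score means higher bibliometric impact.
    Ties are broken by the sort order (an assumption of this sketch).
    """
    ranked = sorted(impact, key=impact.get, reverse=True)
    n = len(ranked)
    classes = {}
    for position, journal in enumerate(ranked, start=1):
        # Share of journals ranked at or above this one (1/n for the best).
        share_from_top = position / n
        for cutoff, label in CLASS_CUTOFFS:
            if share_from_top <= cutoff:
                classes[journal] = label
                break
    return classes


# Ten made-up journals with impact scores 10 (best) down to 1 (worst).
example = {f"journal_{i}": 11 - i for i in range(1, 11)}
print(classify_journals(example))
```

With ten journals, the sketch places two in A, two in B, one in C, and five in D, mirroring the 20/20/10/50 split.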

Comparing informed peer review and bibliometric analysis

We label the distributions of bibliometric and informed peer-review scores as F and P, respectively. In comparing them, two criteria may be considered. The first is the degree of agreement between F and P, that is, whether F and P tend to assign the same score to the same article. The second is the presence of a systematic difference between F and P, measured by the average score difference between them. The two distributions may exhibit the same average but radical disagreements, with reviewers systematically assigning to category D articles assigned to category A by bibliometric analysis and vice versa. Conversely, the two may show substantial agreement while one still exhibits a higher average.

We measure the level of agreement between F and P using Cohen’s kappa, and detect systematic differences between sample means using a standard t-test for paired samples. We use the numerical scores 1, 0.8, 0.5, and 0, assigned by the VQR to the classes A–D, to compute the weights for the kappa statistic and to compute numerical averages.
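A weighted kappa of this kind can be sketched as follows. The function takes a 4×4 table of counts (rows for one method's class, columns for the other's) and a numerical score per class. The mapping from scores to agreement weights, one minus the absolute score distance divided by the score range, is an assumption of this sketch; the exact weighting scheme used in the study is described in Bertocchi et al. (2013). The tables in the usage lines are made up for illustration.

```python
def weighted_kappa(table, scores):
    """Weighted Cohen's kappa for a square table of counts.

    table[i][j] = number of articles placed in class i by one method
    and in class j by the other; scores[i] = numerical score of class i.
    """
    k = len(scores)
    span = max(scores) - min(scores)
    # Agreement weight: 1 on the diagonal, falling linearly with score distance
    # (an assumed mapping from class scores to weights).
    w = [[1 - abs(scores[i] - scores[j]) / span for j in range(k)] for i in range(k)]

    n = sum(sum(row) for row in table)
    row_share = [sum(table[i]) / n for i in range(k)]
    col_share = [sum(table[i][j] for i in range(k)) / n for j in range(k)]

    # Weighted agreement actually observed, and expected under independence.
    observed = sum(w[i][j] * table[i][j] / n for i in range(k) for j in range(k))
    expected = sum(
        w[i][j] * row_share[i] * col_share[j] for i in range(k) for j in range(k)
    )
    return (observed - expected) / (1 - expected)


VQR_SCORES = [1, 0.8, 0.5, 0]          # scores for classes A-D, from the text
LINEAR_SCORES = [1, 2 / 3, 1 / 3, 0]   # equally spaced classes (linear weights)

# Hypothetical table: the two methods swap classes A and D for every article.
swapped = [[0, 0, 0, 5], [0, 0, 0, 0], [0, 0, 0, 0], [5, 0, 0, 0]]
print(weighted_kappa(swapped, VQR_SCORES))
```

In the swapped table both methods produce the same average score, yet kappa is negative, which is the "same average but radical disagreement" case: the average difference alone cannot reveal it.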

Table 1 below reports the kappa statistic for the entire sample and by sub-area. The kappa statistic is scaled to be zero when the level of agreement is what one would expect to observe by pure chance, and to be one when there is perfect agreement. For robustness we also show the statistic computed using standard linear weights (1, 0.67, 0.33, and 0). Overall, kappa is equal to 0.54 and statistically different from zero at the 1% level. For Economics, Management, and Statistics, the value of kappa is close to the overall value for the sample, while History has a lower kappa value (0.29). For each sub-area, kappa is statistically different from zero at the 1% level.

Table 1. Levels of agreement between informed peer review and bibliometric analysis

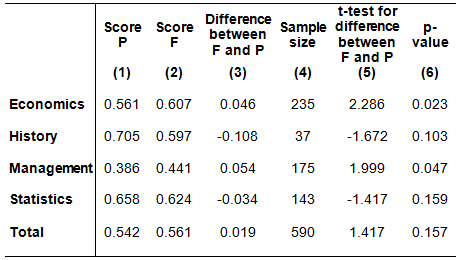

Table 2 reports the average scores resulting from the F and P evaluations. The first two columns report the average scores of the informed peer review (Score P) and bibliometric evaluation (Score F). The third column shows the difference between F and P scores, while the fifth column shows the associated paired t-statistic. Overall, the difference is positive (0.019) and not statistically different from zero at conventional levels (the p-value is 0.157). However, there are differences across sub-areas. For Economics and Management, the difference is positive (0.046 and 0.054, respectively) and statistically different from zero at the 5% level (but not the 1% level). For Statistics and History, the difference is instead negative (-0.108 and -0.034, respectively) but not statistically different from zero.
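The paired comparison behind these figures can be sketched in a few lines. The function below computes the mean of the article-by-article differences between F and P and the corresponding paired t-statistic. The input lists are hypothetical and are not the VQR data. Obtaining a p-value would additionally require the Student t distribution with n − 1 degrees of freedom, which Python's standard library does not provide.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_statistic(scores_f, scores_p):
    """Return (mean difference, t-statistic) for paired scores F and P.

    scores_f[i] and scores_p[i] must refer to the same article.
    """
    if len(scores_f) != len(scores_p):
        raise ValueError("F and P must contain one score per article")
    diffs = [f - p for f, p in zip(scores_f, scores_p)]
    n = len(diffs)
    mean_diff = mean(diffs)
    # Standard error of the mean difference (sample standard deviation / sqrt(n)).
    # This is zero, and the ratio undefined, if all differences are identical.
    std_err = stdev(diffs) / sqrt(n)
    return mean_diff, mean_diff / std_err


# Made-up scores for four articles, using the VQR values 1, 0.8, 0.5, and 0.
f_scores = [1.0, 0.8, 0.5, 1.0]   # bibliometric evaluation (F)
p_scores = [0.8, 0.8, 0.0, 0.5]   # informed peer review (P)
print(paired_t_statistic(f_scores, p_scores))
```

A positive mean difference here means that bibliometric scores are on average higher than peer-review scores for the same articles, matching the sign convention of the F minus P differences reported in the text.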

Table 2. Differences in average scores between informed peer review and bibliometric analysis

To conclude, we find that informed peer review and bibliometric analysis produce similar evaluations. This suggests that the agencies that run research assessments should feel confident about using bibliometric analysis, at least in the disciplines we studied. Of course, we are not recommending its exclusive use, but rather its use as a less costly monitoring tool in between periodic large-scale assessments.

References

Bertocchi, G, A Gambardella, T Jappelli, C A Nappi, and F Peracchi (2013), “Bibliometric Evaluation vs. Informed Peer Review: Evidence from Italy”, CEPR Discussion Paper 9724.

Bornmann, L (2011), “Scientific peer review”, Annual Review of Information Science and Technology, 45: 199–245.

Butler, L (2007), “Assessing university research: A plea for a balanced approach”, Science and Public Policy, 34: 565–574.

Moed, H F (2007), “The future of research evaluation rests with an intelligent combination of advanced metrics and transparent peer review”, Science and Public Policy, 34: 575–583.

Moed, H F (2005), Citation Analysis in Research Evaluation, Dordrecht: Springer.

Nicolaisen, J (2007), “Citation analysis”, Annual Review of Information Science and Technology, 41: 609–641.

Footnote

1 Individual articles with more than five citations per year could be classified into a higher class than that of the journal in which they were published. Details of the classification of journals into the classes can be found in Bertocchi et al. (2013).