Countries around the world often react strongly, and enact broad policy changes, in response to how their students perform on standardised tests. For example, after the results of the 2009 Programme for International Student Assessment (PISA) were announced, US Secretary of Education Arne Duncan commented: “I know sceptics will want to argue with the results, but we consider them to be accurate and reliable, and we have to see them as a challenge to get better…We can quibble, or we can face the brutal truth that we're being out-educated” (Dillon 2010). Similarly, as Grek (2009) notes, “In response to the PISA findings, German education authorities organised a conference of ministers in 2002 and proposed reforms of an urgent nature.”

The policy responses to PISA rankings almost always focus on the teaching of mathematics, reading, and science – the subjects that the PISA tests are designed to measure. But like all standardised tests, PISA scores capture something else – how hard students are trying on the test.

Each of the authors of this column belongs to one of three research teams that have examined how much effort students put into taking the PISA. Using different approaches, we all find evidence that many students who take the PISA do not try as hard as they can, and the level of effort varies widely across countries.

It is not surprising that some students put little energy into PISA tests. The test is low-stakes for the students; in fact, they never even see their individual results. We each conclude that the test measures not just ability but a combination of ability and motivation to do well on the test, that this combination varies widely across countries, and that understanding precisely what the test measures has vital policy implications.

In two recent papers, we measure effort by looking at a student’s ability to maintain focus throughout the test (Borgonovi and Biecek 2016, Zamarro et al. 2016).[1] We estimate how the probability that a student answers a given question correctly varies with the question’s position in the test. Question difficulty is held constant by exploiting the fact that students are randomly assigned different test booklets, which present the questions in different orders.
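The identification idea can be illustrated with a small simulation. The sketch below assumes a hypothetical test in which every student answers the same 40 questions in a randomly shuffled order, with made-up difficulties and a made-up linear decline per position (real PISA booklets rotate clusters of items rather than shuffling individual questions). It recovers the position effect by comparing each question only with itself across positions, i.e. a regression with question fixed effects. It shows the logic of booklet randomisation, not the exact estimator used in either paper.

```python
import numpy as np

# Synthetic illustration only: difficulty and decline values are assumptions, not PISA estimates.
rng = np.random.default_rng(0)
n_students, n_items = 2000, 40
true_decline = 0.004                           # assumed drop in P(correct) per position
difficulty = rng.uniform(0.3, 0.8, n_items)    # assumed P(correct) of each item at position 0

# Booklet rotation: each student sees the same items in a random order.
orders = np.argsort(rng.random((n_students, n_items)), axis=1)  # orders[s, k] = item at position k
items = orders.ravel()
positions = np.tile(np.arange(n_items), n_students).astype(float)
p = difficulty[items] - true_decline * positions
correct = (rng.random(p.size) < p).astype(float)

def demean_by(group, x):
    """Subtract the group mean of x (here: the mean for each question)."""
    means = np.bincount(group, weights=x) / np.bincount(group)
    return x - means[group]

# Comparing each question only with itself across positions holds difficulty fixed;
# this is OLS of correctness on position with question fixed effects.
y_d = demean_by(items, correct)
x_d = demean_by(items, positions)
slope = (x_d @ y_d) / (x_d @ x_d)
print(f"estimated change in P(correct) per position: {slope:.4f}")
```

Because the order is random, difficulty is balanced across positions; the within-question comparison simply removes difficulty differences from the noise and sharpens the estimate.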

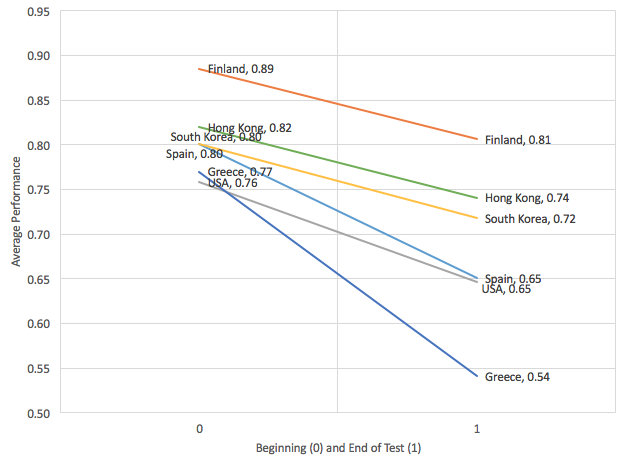

In both studies, we find that every country shows some decline in performance on later portions of the test. On average, students are 13.7 percentage points less likely to answer a question correctly if it appears among the last ten items of the test rather than the first ten. However, the rate of decline varies substantially across countries and is slower among strong PISA performers. Figure 1, from Zamarro et al. (2016), displays the estimated probability of answering questions correctly at the beginning and at the end of the 2009 PISA test for a selected group of countries. Strong performers such as Finland and Hong Kong show a smaller decline in the probability of a correct answer than weaker performers such as the US and Spain. Overall, we find that between 32% and 38% of the cross-country variation in PISA scores can be explained by differences in student effort across countries.
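The 32% to 38% figure is a share of cross-country variance. One common way to express such a share is the R² of a cross-country regression of mean scores on an effort measure; the sketch below computes it on synthetic countries. The function name `share_explained` and all data are hypothetical, and this illustrates the concept rather than the decomposition used in the studies cited.

```python
import numpy as np

def share_explained(scores, effort):
    """R^2 from an OLS regression of country mean scores on a country-level effort measure."""
    scores = np.asarray(scores, dtype=float)
    effort = np.asarray(effort, dtype=float)
    X = np.column_stack([np.ones_like(effort), effort])   # intercept + effort
    beta, *_ = np.linalg.lstsq(X, scores, rcond=None)
    resid = scores - X @ beta
    return 1.0 - (resid @ resid) / np.sum((scores - scores.mean()) ** 2)

# Synthetic countries, NOT PISA data: scores depend partly on effort, partly on everything else.
rng = np.random.default_rng(1)
effort = rng.normal(0.0, 1.0, 60)    # hypothetical standardised effort measure
other = rng.normal(0.0, 1.0, 60)     # hypothetical non-effort differences
scores = 490 + 20 * effort + 27 * other
r2 = share_explained(scores, effort)
print(f"share of cross-country variance explained by effort: {r2:.2f}")
```

With a single regressor and an intercept, this R² equals the squared correlation between scores and the effort measure.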

Figure 1 The estimated decline in performance during the PISA test, by country

Note: Estimates were obtained from random-coefficient regressions by country, with a random intercept and slope. The graph shows estimated performance at the beginning and at the end of the test.
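The note describes country-level fits of an intercept and a slope in question position. As a simplified stand-in, the sketch below fits a separate straight line to each hypothetical country’s data by ordinary least squares and reads off predicted performance at the first and last positions. A true random-coefficient model would instead treat intercepts and slopes as draws from a common distribution and pool information across units. The function `start_end_performance` and all data are illustrative assumptions.

```python
import numpy as np

def start_end_performance(position, correct, first, last):
    """Fit P(correct) = a + b * position by OLS; return fitted values at the first and last positions."""
    b, a = np.polyfit(position, correct, deg=1)   # polyfit returns the slope first, then the intercept
    return a + b * first, a + b * last

# Synthetic data for two hypothetical countries with the same start but different declines.
rng = np.random.default_rng(2)
n_items, n_students = 60, 500
position = np.tile(np.arange(1, n_items + 1), n_students).astype(float)
for country, decline in [("A", 0.002), ("B", 0.005)]:
    p = 0.65 - decline * (position - 1)
    correct = (rng.random(position.size) < p).astype(float)
    start, end = start_end_performance(position, correct, 1, n_items)
    print(f"country {country}: start {start:.2f}, end {end:.2f}")
```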

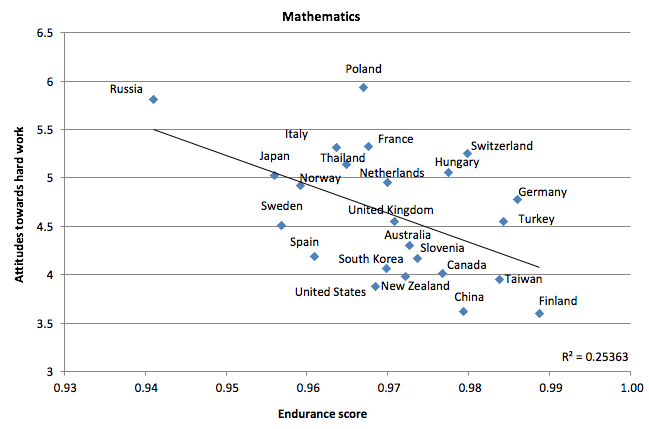

Additionally, performance over the course of the test appears to be correlated with attitudes towards hard work. In Borgonovi and Biecek (2016), we measure effort by calculating an endurance score for each country, defined as a function of the difference between the probabilities that students answer questions correctly when they appear earlier versus later in the test. Figure 2 shows that countries that value hard work less also show less endurance on the 2012 PISA mathematics test.

Figure 2 The relationship between societal attitudes towards hard work and endurance

Note: Attitudes towards hard work are measured on a 1-10 scale, where 1 = “In the long run, hard work usually brings a better life” and 10 = “Hard work doesn’t generally bring success – it’s more a matter of luck and connections.”
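The exact functional form of the endurance score is not given here. Purely as an assumption, the sketch below defines a hypothetical `endurance_score` as the late-minus-early difference in the probability of a correct answer and correlates it with the 1-10 attitude scale across synthetic countries. Because higher attitude values mean hard work is valued less, the pattern in Figure 2 corresponds to a negative correlation.

```python
import numpy as np

def endurance_score(p_early, p_late):
    """Hypothetical endurance measure: change in P(correct) from early to late items.
    Values closer to zero mean less decline, i.e. more endurance."""
    return np.asarray(p_late, dtype=float) - np.asarray(p_early, dtype=float)

# Synthetic countries, NOT PISA data. Attitude uses the 1-10 scale (higher = hard work valued less);
# the assumed link is that a higher attitude value comes with a larger decline.
rng = np.random.default_rng(3)
attitude = rng.uniform(3, 7, 40)
p_early = rng.uniform(0.5, 0.7, 40)
p_late = p_early - 0.02 * attitude + rng.normal(0.0, 0.01, 40)
endurance = endurance_score(p_early, p_late)
r = np.corrcoef(attitude, endurance)[0, 1]
print(f"correlation between attitude and endurance: {r:.2f}")
```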

One challenge these studies face is that declining performance may partly reflect a lower ability to work quickly or maintain focus, rather than lower motivation to do so. If so, the cross-country differences could just as easily reflect differences in the distribution of ability across countries. To address this issue, in Gneezy et al. (2017) we conduct a field experiment in three high schools in Shanghai, China, and two high schools in the US.[2] The schools represent different levels of performance within each country, allowing us to sample students from throughout their respective distributions.

Students take a 25-question mathematics test made up of questions that were used in past PISA tests. Students are randomly assigned either to a treatment group, whose members receive an envelope containing $25 in cash and are told that $1 will be taken away for each incorrect answer, or to a control group that is not paid. Students learn about the incentives just before taking the test, so any impact on performance can only operate through increased effort on the test itself rather than through, for example, better preparation or more studying.
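The incentive rule is simple arithmetic, encoded below in a hypothetical `payout` function. The text does not say how unanswered questions are treated, so that choice is exposed as an assumed flag rather than presented as the study’s rule.

```python
def payout(n_correct, n_answered, n_questions=25, endowment=25.0, penalty=1.0,
           unanswered_counts_as_wrong=True):
    """Cash kept under the treatment incentive: endowment minus a penalty per wrong answer.

    Whether unanswered questions count as wrong is an assumption (flag), not taken from the text.
    """
    if not 0 <= n_correct <= n_answered <= n_questions:
        raise ValueError("need 0 <= n_correct <= n_answered <= n_questions")
    wrong = n_answered - n_correct
    if unanswered_counts_as_wrong:
        wrong += n_questions - n_answered
    return endowment - penalty * wrong

print(payout(14, 20))                                    # 14.0: skipped questions penalised
print(payout(14, 20, unanswered_counts_as_wrong=False))  # 19.0: only wrong attempts penalised
```

With skipped questions penalised, the payout equals the number of correct answers, so each additional correct answer is worth $1 and attempting a question can never lower the payout. If skipped questions were free, a wrong attempt would cost $1 while a skip would cost nothing, which would discourage guessing.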

Shanghai ranked first in all academic categories on the 2012 PISA, and in the research discussed above, Shanghai students show high levels of motivation during the PISA test, while students in the US do not. We therefore expect US students to improve their performance in response to financial incentives, since the incentives provide extrinsic motivation that can substitute for low intrinsic motivation. We do not expect Shanghai students to improve, since they are already at or near their effort frontier.

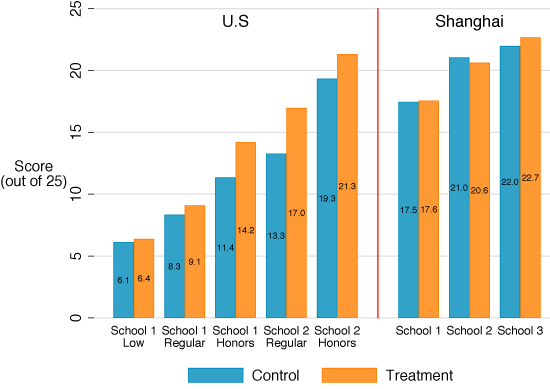

The results are consistent with this hypothesis – students in Shanghai do just as well whether they are paid or not, while students in the US improve dramatically in response to the financial incentives. Figure 3 displays the results separately for each school and track (low, regular, and honours students in the US).

Figure 3 Effect of financial incentives on test scores in the US and Shanghai

In Shanghai, performance at each school changes little, if at all, in response to the financial incentives. In the US, however, there are strong effects among all but the lower-ability students. The effects are particularly strong among students in the middle of the ability distribution. For example, honours students at the first school (where only 22% of students meet minimum state proficiency standards) improve their average score from 11.4 to 14.2 correct answers, while regular students at the second school improve theirs from 13.3 to 17.0. Most of this effect occurs in the latter portion of the test, where performance declines in the absence of incentives. When paid, US students attempt more questions in the second half of the test and are more likely to answer correctly the questions they do attempt (see Table 3 in Gneezy et al. 2017).
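To put the reported gains on a common scale, it helps to express them both in questions and as a share of the 25-question test. For honours students at the first school, for example, 14.2 - 11.4 = 2.8 extra correct answers is about 11 percentage points of the test. The sketch below reads the reported averages as unpaid (control) and paid (treatment) group means; the helper name `incentive_gain` is hypothetical.

```python
N_QUESTIONS = 25  # length of the experimental test

def incentive_gain(control_mean, treated_mean, n_questions=N_QUESTIONS):
    """Return the gain in questions, in percentage points of the test, and in % of the control mean."""
    diff = treated_mean - control_mean
    return diff, 100 * diff / n_questions, 100 * diff / control_mean

# Average scores reported in the text (control = unpaid, treated = paid).
groups = [("honours, first school", 11.4, 14.2), ("regular, second school", 13.3, 17.0)]
for label, control, treated in groups:
    questions, pct_points, pct_of_control = incentive_gain(control, treated)
    print(f"{label}: +{questions:.1f} questions, +{pct_points:.1f} pp, +{pct_of_control:.0f}% vs unpaid")
```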

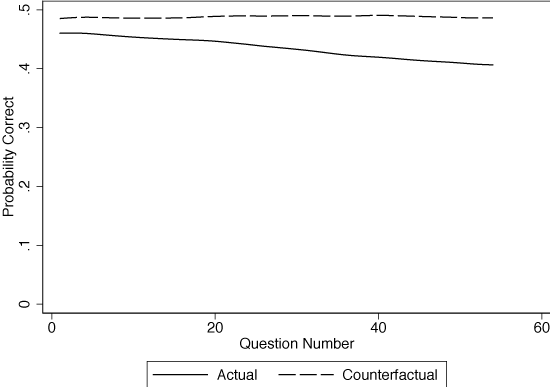

The incentives largely eliminate the decline in performance over the course of the test. To show this, Gneezy et al. (2017) simulate the effects of incentives on mathematics PISA performance in the US national sample. Figure 4 reports the proportion correct on the 2009 PISA mathematics questions by question number (students received an average of 16 mathematics questions, which appear in positions 1-54). As the solid line shows, the performance of US students declines over the course of the test. To estimate counterfactual performance under incentives, we first estimate treatment effects by question order in the experimental sample and then map these effects onto performance by question number on the PISA. The results suggest that with incentives, the probability of answering a question correctly would remain constant over the course of the entire test.
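The text does not spell out how effects estimated over a 25-question test are mapped onto PISA positions 1-54. One simple possibility, assumed here purely for illustration, is to match each PISA position to the experimental question at the same relative position in the test and add that question’s treatment effect to the baseline proportion correct. The function name and all inputs below are hypothetical.

```python
import numpy as np

def counterfactual_pisa(pisa_positions, baseline, exp_effects, n_pisa_positions=54):
    """Add treatment effects estimated by question order in the experiment to baseline PISA
    proportion correct, matching PISA positions to experimental order by relative position.
    The relative-position matching is an assumption made for this sketch."""
    exp_effects = np.asarray(exp_effects, dtype=float)
    rel = (np.asarray(pisa_positions, dtype=float) - 1) / (n_pisa_positions - 1)  # 0 = first, 1 = last
    idx = np.rint(rel * (len(exp_effects) - 1)).astype(int)  # nearest experimental question
    return np.clip(np.asarray(baseline, dtype=float) + exp_effects[idx], 0.0, 1.0)

# Hypothetical inputs: a baseline that declines over the test and effects that grow with order.
positions = np.arange(1, 55)
baseline = np.linspace(0.55, 0.40, 54)
effects = np.linspace(0.0, 0.15, 25)
adjusted = counterfactual_pisa(positions, baseline, effects)
print(adjusted[[0, 26, 53]].round(3))
```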

Figure 4 Estimated treatment effects on the 2009 PISA by question order, US

Note: Lines are smoothed using local polynomial regression.
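Local polynomial regression fits a low-order polynomial around each point, using weights that fall off with distance. The sketch below implements the degree-1 (local linear) case with a tricube kernel, the weighting used in LOWESS. The kernel, degree, and bandwidth behind Figure 4 are not stated, so these choices, the helper name `local_linear_smooth`, and the synthetic data are assumptions.

```python
import numpy as np

def local_linear_smooth(x, y, grid, bandwidth):
    """Local linear (degree-1 local polynomial) regression with tricube weights.

    For each point x0 in `grid`, fit a weighted straight line to the data within
    `bandwidth` of x0 and return the fitted value at x0. The window must contain
    at least two distinct x values, otherwise the weighted system is singular.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    fitted = []
    for x0 in np.asarray(grid, dtype=float):
        u = np.abs(x - x0) / bandwidth
        w = np.where(u < 1, (1 - u**3) ** 3, 0.0)          # tricube kernel, zero outside the window
        X = np.column_stack([np.ones_like(x), x - x0])      # centred, so the intercept is the fit at x0
        WX = X * w[:, None]
        beta = np.linalg.solve(X.T @ WX, WX.T @ y)          # weighted least-squares normal equations
        fitted.append(beta[0])
    return np.array(fitted)

# Synthetic proportions correct by question position (NOT PISA data).
rng = np.random.default_rng(4)
pos = np.arange(1, 55, dtype=float)
prop_correct = 0.55 - 0.003 * pos + rng.normal(0.0, 0.05, pos.size)
smoothed = local_linear_smooth(pos, prop_correct, grid=pos, bandwidth=12.0)
```

A local linear fit reproduces a straight line exactly, which makes it less biased at the ends of the test than a simple moving average.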

What do these results mean for country rankings? Gneezy et al. (2017) find that if the incentives were applied in the US while all other countries remained the same, the US ranking on the 2012 PISA mathematics test would improve from 36th to 19th.[3] When results in every country are adjusted for differences in student effort, the change is less stark: Zamarro et al. (2016) make this adjustment and find that the US mathematics ranking would improve only from 35th to 32nd.
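The two ranking exercises differ in which scores move. The sketch below, with hypothetical country scores and adjustments (not PISA values), shows why adjusting only the US produces a bigger jump than adjusting every country: when other countries also gain, many of them stay ahead. The helper names are illustrative.

```python
def rank_of(country, scores):
    """1-based rank of `country` (1 = highest score); tied countries share the better rank."""
    target = scores[country]
    return 1 + sum(1 for s in scores.values() if s > target)

def adjusted_rank(country, scores, adjustments):
    """Rank after adding a per-country adjustment (countries not listed are left unchanged)."""
    adjusted = {c: s + adjustments.get(c, 0.0) for c, s in scores.items()}
    return rank_of(country, adjusted)

# Hypothetical scores and adjustments, NOT PISA data.
scores = {"A": 500, "B": 490, "C": 480, "US": 470}
print(rank_of("US", scores))                                              # 4
print(adjusted_rank("US", scores, {"US": 25}))                            # 2: only the US adjusted
print(adjusted_rank("US", scores, {"A": 5, "B": 20, "C": 30, "US": 25}))  # 4: every country adjusted
```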

That students do not try as hard as they can on low-stakes tests such as the PISA does not mean that what the test does measure is necessarily flawed – far from it. Rather, the test measures a combination of ability and motivation that may be more important than ability alone. In Zamarro et al. (2016), we argue that declining effort over the course of the test may partly capture important non-cognitive skills such as conscientiousness and self-control. In addition, in Borgonovi and Biecek (2016), we note that student-level PISA performance is positively correlated with important lifetime outcomes such as high school completion and college attendance. Much of this correlation is likely driven by the non-cognitive skills that go along with the effort a student is willing to put into a low-stakes test.

Although both academic achievement and student motivation matter for individuals’ long-term outcomes, deficiencies in one may not be remedied by policies designed to improve the other. One of the major innovations in education policy over the past decades has been the development of robust metrics for comparing students’ reading, mathematics, and science competencies across countries. The next challenge is to develop equally sound methods for measuring cognitive and non-cognitive outcomes separately, in a way that is comparable internationally.

References

Balart, P and A Cabrales (2014), “La maratón de PISA: la perseverancia como factor del éxito en una prueba de competencias”, Reflexiones Sobre El Sistema Educativo Español 75.

Borghans, L and T Schils (2015), “The leaning tower of PISA”, Working Paper.

Borgonovi, F and P Biecek (2016), “An international comparison of students’ ability to endure fatigue and maintain motivation during a low-stakes test”, Learning and Individual Differences 49: 128-137.

Dillon, S (2010), “Top test scores from Shanghai stun educators”, The New York Times, 7 December.

Gneezy, U, J A List, J A Livingston, S Sadoff, X Qin and Y Xu (2017), “Measuring success in education: The role of effort on the test itself”, NBER Working Paper No 24004.

Grek, S (2009), “Governing by numbers: the PISA ‘effect’ in Europe”, Journal of Education Policy 24(1): 23-37.

Zamarro, G, C Hitt and I Mendez (2016), “When students don’t care: Reexamining international differences in achievement and non-cognitive skills”, EDRE Working Paper 2016-18.

Endnotes

[1] See also Balart and Cabrales (2014) and Borghans and Schils (2015).

[2] In addition to this approach, in Zamarro et al. (2016) we also examine between-country differences in patterns of non-response and careless answering on the background PISA questionnaire that follows the test; the findings closely mirror those obtained when examining the effort exerted during the test session.

[3] Similarly, the data from Borgonovi and Biecek (2016) show that if US performance remained constant from the first cluster of questions to the third, the US ranking based on third-cluster performance would improve from 21st to 7th.