The European Banking Authority (EBA), together with the national competent authorities (the Single Supervisory Mechanism, the ECB, and others), regularly conducts stress tests for major banks in the EU.[1] The EBA kicks off a stress test with the release of two macroeconomic scenarios: a baseline scenario and an adverse scenario. Following the EBA’s methodology, banks apply these scenarios to their exposures at a fixed date and project losses and capital ratios over a three-year horizon under a static balance sheet assumption. Banks submit their projections to the supervisors, who scrutinise the projections and benchmark them against outcomes of a supervisory challenger model. After results have been agreed upon by banks and regulators, they are made public by the EBA and serve as an input for the supervisory evaluation process that sets bank capital requirements for the following year.[2]

The European approach to stress testing differs significantly from that of other countries, most prominently the US, where the Federal Reserve projects capital under stress for individual banks following an “industry-wide approach, in which the estimated model parameters are the same for all” banks.[3] Our latest paper highlights a key issue with the European approach of letting banks run their own stress test models, providing evidence that some banks were able to significantly lessen the stringency of the 2016 EBA stress test by manipulating the results (Niepmann and Stebunovs 2018).[4] Based on our estimations, these banks projected systematically lower credit losses than what their models used in earlier years would have predicted for the same macro scenario and risk exposure. Detecting and counteracting such bank behaviour apparently poses a challenge.

The approach

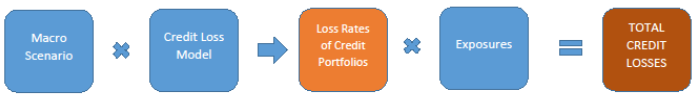

Our analysis is based on the methodology proposed in Philippon et al. (2017). The idea is simple: the EBA publishes detailed information on banks’ projected credit loss rates by bank, country, and portfolio, as well as on the scenarios that underlie these projections. Hence, one can use standard regression techniques to estimate a credit loss model that produces bank-specific loss rates as a function of macroeconomic variables (GDP growth, the inflation rate, and the unemployment rate). The model features a common effect of macroeconomic variables on loss rates as well as a bank-specific effect. Once the credit loss model is estimated, one can calculate an estimate of total projected credit losses for each bank for a given scenario by multiplying loss rates by credit exposures and summing the losses (see Figure 1).

Figure 1 The empirical approach

Note: The figure summarises our approach to calculating bank-specific credit losses. The estimated credit loss model is fed with a macroeconomic scenario consisting of GDP growth rates, inflation and unemployment rates for more than 20 countries to project bank-specific loss rates by portfolio. Loss rates are then multiplied by exposures and summed over countries and portfolios to yield each bank’s total projected credit losses.
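The aggregation step summarised in Figure 1 can be sketched in a few lines of Python. This is a minimal illustration, not our estimation code: the linear functional form, the zero floor on loss rates, the coefficient values, and the names `Macro`, `loss_rate`, and `total_losses` are all assumptions made for the example, and the sketch ignores portfolio-specific coefficients.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Macro:
    """Scenario values for one country (hypothetical container)."""
    gdp_growth: float
    inflation: float
    unemployment: float


def loss_rate(common, bank_specific, macro):
    """Loss rate as a linear function of macro variables.

    Each coefficient is the sum of a common effect and a bank-specific
    effect. The linear form and the zero floor are illustrative assumptions.
    """
    x = (1.0, macro.gdp_growth, macro.inflation, macro.unemployment)
    rate = sum((c + b) * v for c, b, v in zip(common, bank_specific, x))
    return max(rate, 0.0)


def total_losses(common, bank_specific, scenario, exposures):
    """Multiply loss rates by exposures and sum over countries and portfolios.

    scenario:  {country: Macro}
    exposures: {(country, portfolio): exposure amount}
    """
    return sum(
        loss_rate(common, bank_specific, scenario[country]) * amount
        for (country, _portfolio), amount in exposures.items()
    )


# Made-up numbers: intercept, GDP growth, inflation, unemployment coefficients.
common = (0.01, -0.002, 0.0, 0.001)
bank_specific = (0.0, -0.001, 0.0, 0.0)
scenario = {"DE": Macro(gdp_growth=-2.0, inflation=1.0, unemployment=8.0)}
exposures = {("DE", "corporate"): 1000.0}

losses = total_losses(common, bank_specific, scenario, exposures)
```

With these made-up inputs the loss rate is 2.4% and total losses are 24 on an exposure of 1,000. In practice the coefficients would come from a regression on the published EBA data rather than being set by hand.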

To reveal systematic model adjustments that banks made, we decompose changes in banks’ projected credit losses between the 2014 and the 2016 stress tests into three components: changes in the EBA scenarios, changes in model parameters, and changes in credit exposures. To this end, we estimate banks’ credit loss models for the 2014 and the 2016 stress tests separately. We calculate hypothetical credit losses for different combinations of the two years’ macro scenarios, credit loss models, and exposures (the elements in blue in Figure 1). Our focus is on the adverse scenarios in both tests.
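One way to organise such a decomposition is to swap in the 2016 inputs one at a time. The sketch below is an illustration under stated assumptions: the ordering (exposures first, then scenario, then model) is chosen so that the model component is evaluated at the 2016 scenario and exposures, the function `decompose` and the toy loss function are hypothetical, and it works with level differences rather than the log or percent changes shown in the figures.

```python
from typing import Callable, Dict


def decompose(loss: Callable[[str, str, str], float]) -> Dict[str, float]:
    """Split the 2014-to-2016 change in projected losses into three parts.

    loss(scenario_year, model_year, exposure_year) returns hypothetical
    credit losses for that combination. The swap order is an assumption.
    """
    base = loss("2014", "2014", "2014")
    step1 = loss("2014", "2014", "2016")  # swap in the 2016 test's exposures
    step2 = loss("2016", "2014", "2016")  # then the 2016 adverse scenario
    final = loss("2016", "2016", "2016")  # then the 2016 loss model
    return {
        "exposures": step1 - base,
        "scenario": step2 - step1,
        "model": final - step2,
        "total": final - base,
    }


# Toy inputs (made up): scenario severity, model sensitivity, exposure size.
severity = {"2014": 2.0, "2016": 3.0}
sensitivity = {"2014": 0.010, "2016": 0.008}
exposure = {"2014": 1000.0, "2016": 1100.0}


def toy_loss(scenario_year: str, model_year: str, exposure_year: str) -> float:
    return severity[scenario_year] * sensitivity[model_year] * exposure[exposure_year]


parts = decompose(toy_loss)
```

Because each step changes only one input, the three components add up to the total change by construction. A different swap order would generally give somewhat different component sizes.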

Evidence for systematic adjustments

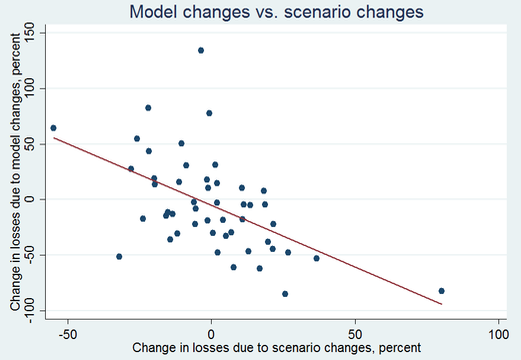

Figure 2 presents evidence for systematic adjustments of credit loss projections in the 2016 stress tests. The figure plots two objects against each other. Changes in our estimates of banks’ projected credit losses between the 2014 and 2016 stress tests that resulted only from changes in the underlying loss models are on the x-axis.[5] The y-axis shows changes in our estimates of projected credit losses between the 2014 and 2016 stress tests that stem from changes in the adverse macro scenario and would have resulted if banks had applied the 2014 credit loss models. The chart reveals a strong negative relationship between the two variables. In other words, the banks whose credit losses would have increased more because of scenario changes had they applied the 2014 credit loss models were the banks that had changes in their credit loss models that brought about larger declines in losses. For many banks, these model changes essentially brought the 2016 credit loss projections closer to those from 2014, thus smoothing losses across the stress tests.

In principle, changes to the loss models could reflect changes in the riskiness of banks’ credit portfolios between stress tests, but the relationship shown in Figure 2 survives even when we account for this factor by controlling for changes in the risk-weight densities of the underlying portfolios. (A portfolio’s risk-weight density is defined as the ratio of the portfolio’s risk-weighted exposures to its unweighted exposures.) Moreover, we find that model changes tended to bring down credit losses more for larger portfolios, where systematic adjustments could have the biggest effect on a bank’s aggregate credit losses.

Figure 2 Evidence for systematic model adjustments to bring down credit losses

Note: The chart shows on the x-axis the percent change in our estimates of a bank’s credit losses between the 2014 and 2016 stress tests that stem from our estimates of changes in the underlying loss model. The percent change in credit losses between the 2014 and 2016 stress tests that resulted from changes in the adverse macro scenario is on the y-axis. Each dot represents a bank that participated in the 2014 and 2016 stress tests. The red line represents the estimated linear relationship.

We estimate that the magnitude of model adjustments was economically significant. Had banks kept their credit loss models unchanged from 2014, their aggregate projected credit losses would have been around 28% higher. This number masks significant heterogeneity across banks. For the ten banks with the largest negative effect of model changes on projected credit losses, the reduction in credit losses was, on average, equivalent to 15% of the banks’ end-2015 CET1 capital. While there were no hurdle rates for banks in 2016, and hence banks could not fail the test, stress test results would certainly have looked significantly worse without the model manipulation.

What made manipulation possible?

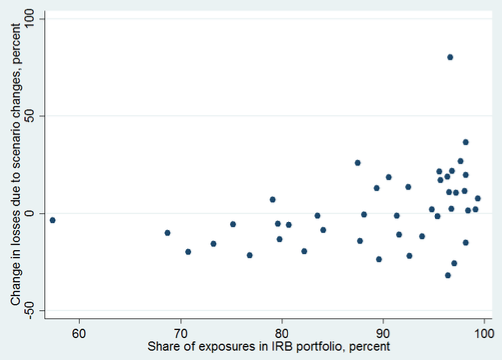

One may immediately ask how such manipulation came about. While we have no definite answer, a couple of clues point us toward two factors that might have made it easier for banks to advantageously adjust their models. First, we show that the banks with the larger incentives to lower credit losses through model changes (those banks that we estimate had larger increases in losses because of scenario changes) were the ones that rely more on the internal-ratings based (IRB) approach (see the top panel of Figure 3). Under the IRB approach, banks build their own models to assess credit risk in their books. The approach likely leaves a lot of room for manoeuvre so that banks can camouflage manipulation amid high complexity of models and exposures.

Figure 3 Estimated changes in credit losses due to scenario and exposure changes plotted against share of IRB exposures and model performance

Note: Both panels have on the y-axis the percent change in our estimates of a bank’s credit losses between the 2014 and 2016 stress tests that resulted from changes in the adverse macro scenario. The left panel plots this variable against a bank’s percent share of exposures subject to the IRB approach in total exposures (as of 2015:Q4). The right panel has a measure of model performance by bank on the x-axis. It was computed by comparing banks’ observed loan loss reserves over gross loans to projections of loan loss rates that follow from feeding the credit loss models, estimated using the 2014 stress test data, with observed macroeconomic variables from 2013 to 2016. Model performance is increasing from left to right on the x-axis (right panel) and is the average simple difference between projected loss rates and our proxies of realised loss rates. Each dot represents a bank that participated in the 2014 and the 2016 stress tests.
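The model performance measure described in the note is an average of simple differences, which can be illustrated as follows. The function name `mean_projection_gap` and the loss rates are hypothetical, and the sketch deliberately leaves open how the sign of the gap maps into better or worse performance.

```python
def mean_projection_gap(projected, realised):
    """Average simple difference between projected and realised loss rates.

    Both inputs are sequences of annual loss rates (for example 2013-2016),
    in the same units and the same order.
    """
    if len(projected) != len(realised) or not projected:
        raise ValueError("need two non-empty series of equal length")
    return sum(p - r for p, r in zip(projected, realised)) / len(projected)


# Made-up annual loss rates in percent for 2013, 2014, 2015, 2016.
projected_rates = [1.2, 1.5, 1.1, 0.9]
realised_rates = [1.0, 1.4, 1.2, 0.8]

gap = mean_projection_gap(projected_rates, realised_rates)
```

Here the realised series stands in for observed loan loss reserves over gross loans, our proxy for loan loss rates.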

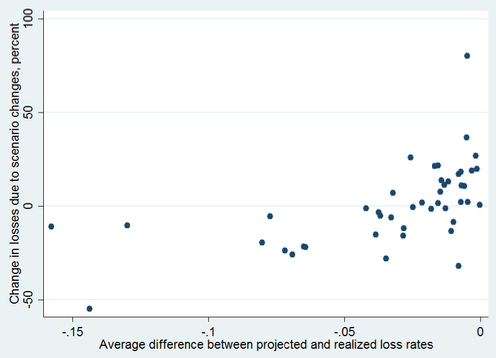

Second, we show that the banks that adjusted their models the most had the better-performing 2014 models, meaning their 2014 models were better at predicting the observed annual loan loss reserves as a share of gross loans (our proxy for loan loss rates) from 2013 through 2016 (bottom panel of Figure 3). These banks may have been under less scrutiny from supervisors. Indeed, supervisors appear to have paid more attention to the models of weaker banks, as evidenced by Figure 4. It shows a negative relationship between banks’ capital buffers as of end-2015 and improvements in model performance between stress tests: banks with smaller capital buffers tended to have improvements in model performance (positive values on the y-axis). Moreover, model performance overall remained roughly the same over time, so even though weaker banks’ models tended to improve, stronger banks’ models tended to deteriorate.

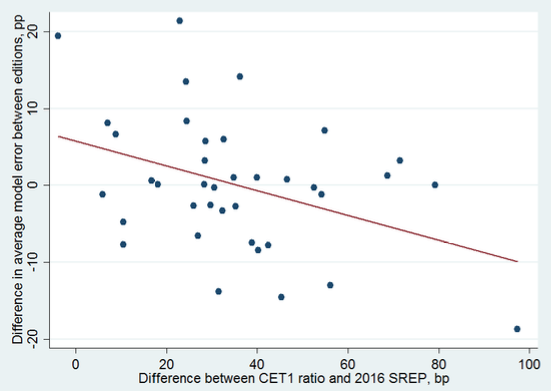

Figure 4 Improvements in predictive power of banks’ models related to their capital buffers

Note: This chart plots a bank’s capital buffer in basis points, computed as the difference between its CET1 capital ratio as of 2015:Q4 and its equivalent 2016 SREP requirement, against a measure of the change in model performance between the 2014 and 2016 stress tests. Model performance was measured by comparing banks’ observed loan loss rates to projections that follow from feeding the credit loss models estimated for 2014 and, separately, for 2016 with observed macroeconomic variables from 2013 to 2016. Improvements in model performance are associated with positive values on the y-axis and are in percentage points. Each dot represents a bank that participated in the 2014 and 2016 stress tests. The red line represents the estimated linear relationship.
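The capital buffer in the note is a difference of two ratios expressed in basis points. A minimal sketch, with a hypothetical function name and made-up ratios:

```python
def capital_buffer_bp(cet1_ratio_pct: float, srep_requirement_pct: float) -> float:
    """Capital buffer in basis points.

    Both inputs are in percent of risk-weighted assets;
    one percentage point equals 100 basis points.
    """
    return (cet1_ratio_pct - srep_requirement_pct) * 100.0


# Made-up example: CET1 ratio of 12.5% against a requirement of 9.75%.
buffer_bp = capital_buffer_bp(12.5, 9.75)
```

In this example the buffer is 275 basis points. A negative value would indicate a bank below its requirement.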

What are banks’ incentives to improve stress test performance?

Our results are in line with recent evidence that banks will use the flexibility they have to improve regulatory capital ratios. Behn et al. (2016) find that the introduction of the IRB approach in Germany led to lower projected loss rates and applied risk weights for banks that adopted the approach. Similarly, Plosser and Santos (2014) document that low-capital banks in the US bias their IRB risk estimates downward, consistent with an effort to improve their regulatory capital ratios.[6] In the stress test context, higher projected capital ratios have real consequences because supervisors take the results of the stress test into account when setting bank-specific capital requirements. And lower capital requirements imply a greater potential for capital distribution to shareholders (and higher equity prices) and, at the same time, for bank failures (and higher CDS spreads).

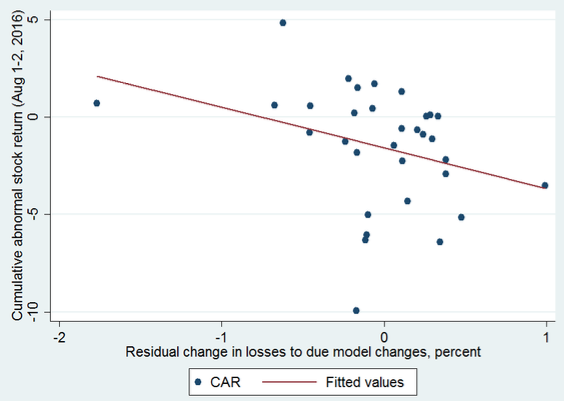

Indeed, equity markets responded systematically to the release of the 2016 stress test results, with stock prices increasing more for banks that appear to have used a 2016 credit loss model that produced rosier projections than their 2014 model. Figure 5 documents a negative relationship between cumulative abnormal stock returns on the first two days after the release of the 2016 stress test results (1-2 August 2016) and our estimates of banks’ changes in credit losses that resulted from model changes. That is, banks that saw a decline in credit losses because of changes to their loss models had higher cumulative abnormal returns. Analysing the response of CDS spreads, we find that the cost of credit protection increased more for banks that had a larger decline in projected credit losses relative to what the 2014 results would have implied. This effect was stronger for banks with lower capital buffers.

These results together suggest that investors did not take the surprise reduction in credit losses due to model changes as mainly reflecting lower credit risk (this would have implied lower CDS spreads and probably lower stock prices). Instead, they anticipated lower capital requirements going forward, resulting in more room for capital distributions and lower dilution risks for stockholders, as well as lower capital relative to risk, implying a higher chance of losses for providers of credit protection.

Figure 5 Stock price responses to changes in credit losses stemming from model changes

Note: This chart plots the percent change in a bank’s credit losses between the 2014 and 2016 stress tests that stem from changes in the underlying loss model against its cumulative abnormal stock market return (the sum of the adjusted log change in the stock price) on the first two days after the release of the stress test results. Each dot represents a bank that participated in the 2014 and the 2016 stress tests. The red line represents the estimated linear relationship.
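A cumulative abnormal return of this kind can be computed as a sum of adjusted daily log price changes. The sketch below assumes a simple market adjustment (subtracting the log return of a benchmark index), which may differ from the adjustment used for Figure 5. The function name and the prices are made up.

```python
import math


def cumulative_abnormal_return(stock_prices, index_prices):
    """Sum of daily log stock returns net of the benchmark's log returns.

    Both inputs are closing prices on consecutive trading days, starting
    with the last close before the event window. The market adjustment is
    an illustrative assumption.
    """
    if len(stock_prices) != len(index_prices) or len(stock_prices) < 2:
        raise ValueError("need two price series of equal length >= 2")
    car = 0.0
    for t in range(1, len(stock_prices)):
        stock_ret = math.log(stock_prices[t] / stock_prices[t - 1])
        index_ret = math.log(index_prices[t] / index_prices[t - 1])
        car += stock_ret - index_ret
    return car


# Made-up closes: the day before release, then the two days after.
stock = [10.0, 10.5, 10.2]
index = [100.0, 101.0, 100.0]

car = cumulative_abnormal_return(stock, index)
```

In this example the index ends where it started, so the cumulative abnormal return equals the stock's own two-day log change of about 2%.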

Lessons for designing stress tests

Banks have incentives to produce the best stress test results possible and, as we show, will exploit the flexibility they have to improve their numbers. This flexibility is substantial for European banks and raises questions about the stress tests’ ability to assess banks’ capital adequacy and resilience. The US framework, in which supervisors run the stress tests and commit to applying the same parameters to all banks, probably leaves much less room for manipulation because models cannot be tailored to individual banks. This approach might be preferable if one wants to make sure that banks do not model their stress away.

References

Begley, T A, A Purnanandam, and K Zheng (2017), “The Strategic Underreporting of Bank Risk,” The Review of Financial Studies 30(10): 3376–3415.

Behn, M W, R Haselmann, and V Vig (2016), “The limits of model-based regulation,” ECB Working Paper No. 1928.

Mariathasan, M and O Merrouche (2014), “The manipulation of Basel risk-weights,” Journal of Financial Intermediation 23(3): 300–321.

Niepmann, F and V Stebunovs (2018), “Modeling Your Stress Away,” CEPR Discussion Paper 12624 (also published as International Finance Discussion Papers No 1232, Board of Governors of the Federal Reserve System).

Philippon, T, P Pessarossi, and B Camara (2017), “Backtesting European Stress Tests,” NBER Working Paper 23083.

Plosser, M and J Santos (2014), “Banks’ incentives and the quality of internal risk models,” Staff Reports 704, Federal Reserve Bank of New York.

Steffen, S (2014), “Robustness, validity, and significance of the ECB’s asset quality review and stress test exercise,” SAFE White Paper Series 23, Goethe University Frankfurt.

Steffen, S and V Acharya (2014), “Making Sense of the Comprehensive Assessment,” SAFE Policy Letter No. 32, Goethe University Frankfurt.

Vallascas, F and J Hagendorff (2013), “The Risk Sensitivity of Capital Requirements: Evidence from an International Sample of Large Banks,” Review of Finance 17(6): 1947–1988.

Endnotes

[1] Stress tests were conducted in 2011, 2014, and 2016 and the latest round is ongoing.

[2] In the euro area, this process is called the Supervisory Review and Evaluation Process (SREP).

[3] See page 13 of “Dodd-Frank Act Stress Tests 2017: Supervisory Stress Test Methodology and Results.”

[4] The EBA stress tests have been criticized by Steffen (2014) and Steffen and Acharya (2014), for example. They find that market-based metrics result in substantially higher estimates of capital shortfalls compared with the 2011 EBA stress tests.

[5] Numbers are calculated using the 2016 adverse macro scenario and exposures as of 2015:Q4. The figure shows log changes.

[6] See also Begley et al. (2017), Mariathasan and Merrouche (2014), and Vallascas and Hagendorff (2013).