Policymakers, researchers, and businesses have turned to behavioural economics and psychology for additional levers to adjust behaviour, looking for successful ‘nudges’ (Thaler and Sunstein 2009). An example of this trend is the recent formation of Behavioral Science Units within the US Government, and increasingly in governments worldwide. The mission of such units is to “translate findings and methods from the social and behavioral sciences into improvements in Federal policies and programs.”

But what guides the choice of behavioural interventions? A criticism of the behavioural and psychological literature is that, if anything, there are too many potential levers to change behaviour, without a clear indication of their relative effectiveness. Different dependent variables and dissimilar participant samples can make direct comparisons of effect sizes across various studies difficult. Given the disparate evidence, it is not clear whether even behavioural experts would be able to determine the relative effectiveness of various possible interventions in a particular setting.

We design and run a large experiment that allows us to compare the relative effectiveness of multiple treatments within one setting (DellaVigna and Pope 2016a). We focus on a real-effort task with treatments including monetary incentives and non-monetary behavioural motivators. The key difference between the 18 treatments, which are motivated by leading behavioural models, is the phrasing of just one paragraph in the instructions.

In a second contribution, we also elicit forecasts from academic experts on the effectiveness of the treatments (DellaVigna and Pope 2016b). We argue that methodologically the collection of forecasts in advance of a study is a valuable step. It captures the beliefs of the research community on a topic, and also indicates in which direction, and how decisively, the results diverge from such beliefs. In our context, the forecasts are of interest because the experiment covers a range of models in behavioural economics, applied to a real-effort setting.

Turning to the details, we recruit 9,800 participants on Amazon Mechanical Turk (MTurk) - an online platform that allows researchers to post small tasks. MTurk has become very popular for experimental research in marketing and psychology (Paolacci and Chandler 2014) and is increasingly used in economics (e.g. Kuziemko et al. 2015).

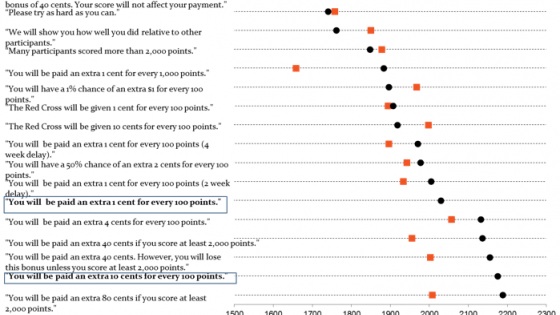

The task for the subjects is to alternately press the "a" and "b" buttons on their keyboards as quickly as possible for ten minutes. The 18 treatments attempt to motivate participant effort using i) standard incentives; ii) non-monetary psychological inducements; and iii) behavioural factors such as present-bias, reference dependence, and social preferences. Figure 1 presents, in somewhat shortened format, the phrasing of the 18 treatments and the average effort (number of button presses) per treatment.

Figure 1 Button presses by treatment (from least to most effective) and confidence intervals

Notes: Figure 1 presents the average score and confidence interval for each of 18 treatments in a real-effort task on Amazon Turk. Participants in the task earn a point for each alternating a-b button press within a 10-minute period. The 18 treatments differ only in one paragraph presenting the treatments, the key sentence of which is reproduced in the first row. Each treatment has about 550 participants.

Figure 1 shows the three main findings about performance. First, monetary incentives have a strong motivating effect – compared to a treatment with no piece rate, performance is 33% higher with a 1-cent piece rate, and another 7% higher with a 10-cent piece rate. A simple model of costly effort estimated on these three benchmark treatments predicts performance very well not only in a fourth treatment with an intermediate (4-cent) piece rate, but also in a treatment with a very low (0.1-cent) piece rate that could be expected to crowd out motivation. Instead, effort in this very-low-pay treatment is 24% higher than with no piece rate.
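To make the logic of fitting a costly-effort model on the three benchmarks concrete, here is a minimal sketch. It assumes a power cost of effort, which is a functional form chosen for illustration and not necessarily the specification estimated in the paper. A worker with intrinsic motivation `s` and piece rate `p` picks effort `e` to maximise `(s + p) * e - k * e**(1 + gamma) / (1 + gamma)`, so effort relative to the no-pay treatment is `(1 + p / s) ** (1 / gamma)`. The inputs are the percentages quoted above, with "another 7% higher" read as 7% above the 1-cent level; the function and variable names are hypothetical.

```python
import math


def fit_power_cost(r1, r10, p1=1.0, p10=10.0):
    """Recover s and 1/gamma from effort ratios relative to the no-pay treatment.

    r1, r10: effort at piece rates p1, p10 divided by effort with no piece rate.
    Assumes relative effort = (1 + p / s) ** (1 / gamma)  (illustrative power-cost model).
    """
    # The ratio of log effort gains depends on s only, so solve for s first.
    target = math.log(r1) / math.log(r10)

    def gap(log_s):
        s = math.exp(log_s)
        return math.log1p(p1 / s) / math.log1p(p10 / s) - target

    # gap() falls from positive to negative as s grows, so bisect on log(s).
    lo, hi = -40.0, 10.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if gap(mid) > 0:
            lo = mid
        else:
            hi = mid
    s = math.exp(0.5 * (lo + hi))
    inv_gamma = math.log(r1) / math.log1p(p1 / s)
    return s, inv_gamma


def relative_effort(p, s, inv_gamma):
    """Predicted effort at piece rate p, relative to the no-pay treatment."""
    return (1.0 + p / s) ** inv_gamma


# Benchmarks from the text: +33% at 1 cent, a further 7% at 10 cents.
R1 = 1.33
R10 = 1.33 * 1.07
s_hat, inv_gamma_hat = fit_power_cost(R1, R10)

# Out-of-sample predictions for the very low and intermediate piece rates.
for rate in (0.1, 4.0):
    print(f"{rate}-cent piece rate: {relative_effort(rate, s_hat, inv_gamma_hat):.3f} x no-pay effort")
```

Under these assumptions the predicted effort at the 0.1-cent rate comes out in the low-to-mid 20s percent above the no-pay level, in line with the pattern described above: a model with no crowd-out term already accounts for the very-low-pay result.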

Second, non-monetary psychological inducements are moderately effective in motivating the workers. The three treatments increase effort compared to the no-pay benchmark by 15 to 21% - a sizeable improvement, especially given that it is achieved at no additional monetary cost. At the same time, these treatments are less effective than any of the treatments with monetary incentives, including the one with very low pay. Among the three interventions, a Cialdini-type comparison (Cialdini et al. 2007) is the most effective.

Third, the results using behavioural factors are generally consistent with behavioural models of social preferences, time preferences, and reference dependence, but with important nuances. Treatments with a charitable giving component motivate workers in a way consistent with warm glow but not pure altruism – the effect on effort is the same whether the charity earns a piece-rate return of 1 cent or 10 cents.

Turning to time preferences, treatments with payments delayed by two or four weeks induce less effort than treatments with immediate pay, for a given piece rate. However, the decline in effort does not display a present-bias pattern; instead, it is consistent with exponential discounting (although the confidence intervals of the estimates do not rule out present bias).
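The difference between the two patterns can be written out with the standard quasi-hyperbolic (beta-delta) discount function of Laibson (1997). The notation below is illustrative and not the paper's estimating equation; $D(t)$ is the weight a worker places on a payment received $t$ weeks from now.

```latex
% Quasi-hyperbolic discounting: beta captures present bias, delta is the per-week discount factor
D(t) =
\begin{cases}
1 & \text{if } t = 0 \\
\beta \, \delta^{t} & \text{if } t > 0
\end{cases}
\qquad 0 < \beta \le 1, \quad 0 < \delta \le 1

% Proportional drop over the first two weeks versus the next two weeks
\frac{D(2)}{D(0)} = \beta \, \delta^{2},
\qquad
\frac{D(4)}{D(2)} = \delta^{2}
```

With present bias ($\beta < 1$), the drop from immediate pay to a two-week delay is steeper than the drop from two to four weeks. With exponential discounting ($\beta = 1$), both ratios equal $\delta^{2}$, so the incentive loses the same proportion of its value over each two-week interval.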

Finally, we consider five treatments designed to provide evidence on two key components of reference-dependent models (Kahneman and Tversky 1979) – loss aversion, and overweighting of small probabilities. Using a claw-back design (Hossain and List 2012), we find evidence consistent with a larger response to an incentive framed as a loss than as a gain. Probabilistic incentives as in Loewenstein et al. (2007) instead induce less effort than a deterministic incentive with the same expected value. This result is not consistent with overweighting of small probabilities.
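The prediction being tested can be illustrated with the probability weighting function of Tversky and Kahneman (1992). In the sketch below, the curvature value 0.61 is their estimate for gains, while the 1% chance and the payoffs are hypothetical numbers chosen for illustration, not the actual treatment parameters; the function name is also hypothetical, and value is assumed to be linear in money.

```python
def tk_weight(p, gamma=0.61):
    """Tversky-Kahneman (1992) probability weighting function for gains."""
    num = p ** gamma
    return num / (num + (1.0 - p) ** gamma) ** (1.0 / gamma)


# Hypothetical comparison: a certain 1-cent piece rate versus a 1% chance
# of a 100-cent piece rate. Both have an expected value of 1 cent.
certain_rate = 1.0
prob, prize = 0.01, 100.0

expected_value = prob * prize            # equal to the certain rate
weighted_value = tk_weight(prob) * prize  # value as perceived under overweighting

print(f"decision weight on p = {prob}: {tk_weight(prob):.4f}")
print(f"expected value: {expected_value:.2f} cents, weighted value: {weighted_value:.2f} cents")
```

If workers overweighted small probabilities in this way, the lottery would feel worth more than the certain piece rate and should induce more effort. The lower effort observed under probabilistic incentives is the opposite of that prediction.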

Next, we measure the beliefs of academic experts about the effectiveness of the treatments. This allows us to capture where the research community stands with respect to (an application of) standard behavioural models.

We surveyed researchers in behavioural economics, experimental economics, and psychology, as well as some non-behavioural economists. We provided the experts with the results of the three benchmark treatments with piece-rate variation to help them calibrate how responsive participant effort was to different levels of motivation. We then asked them to forecast the effort in the other 15 treatment conditions. Out of 314 experts contacted, 208 experts provided a complete set of forecasts. Our initial, broad selection of experts and the 66% response rate ensure a good coverage of behavioural experts.

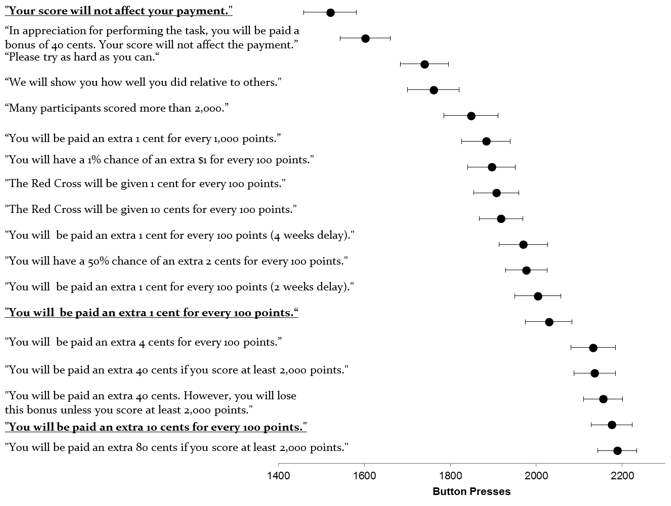

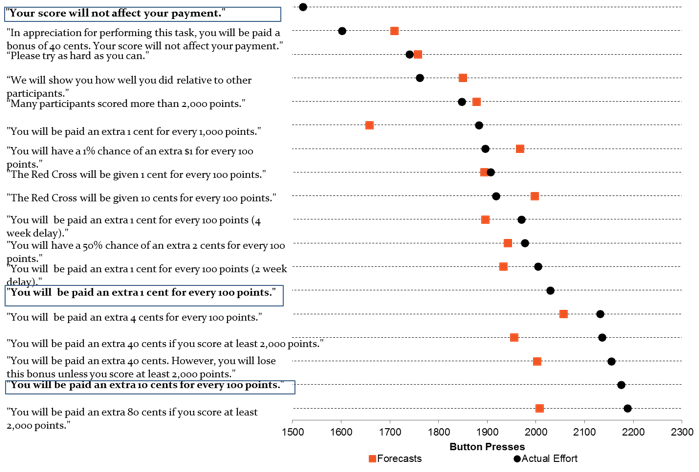

Figure 2 Average button presses by treatment and average expert forecasts

Note: The black circles in Figure 2 present the average score for each of 18 treatments in a real-effort task on Amazon Turk. Participants in the task earn a point for each alternating a-b button press within a 10-minute period. The 18 treatments differ only in one paragraph presenting the treatments, the key sentence of which is reproduced in the first row. Each treatment has about 550 participants. The orange squares represent the average forecast from the sample of 208 experts who provided forecasts for the treatments. The three treatments in bold are benchmarks; the average score in the three benchmarks was revealed to the experts and thus there is no forecast.

Figure 2 shows the average expert forecasts. The experts correctly anticipate several results, in particular the effectiveness of the psychological inducements. Strikingly, the average forecast ranks the six treatments without private performance incentives in exactly the right order: two social comparison treatments, a task significance treatment, the gift exchange treatment, and two charitable giving treatments.

At the same time, the experts fail to correctly predict other features. The largest deviation between the average expert forecast and the actual results is for the very-low-pay treatment, where experts on average anticipate a 12% crowd out, while the evidence indicates no crowd out. In addition, while the experts predict very well the average effort in the charitable giving treatments, they expect higher effort when the charity earns a higher return, counterfactually. The experts also overestimate the effectiveness of the gift exchange treatment by 7%.

Regarding reference dependence, the experts expect the loss framing to have about the same effect as a gain framing with twice the incentives, consistent with the Tversky and Kahneman (1991) calibration, and largely in line with evidence from MTurkers’ effort. Turning to the probability weighting results, the experts on average overestimate the effect of the treatments with probabilistic piece rates.

We also document that, perhaps surprisingly, the forecasts do not materially differ depending on the main field of the expert – on average, behavioural economists, psychologists, laboratory experimenters, and non-behavioural economists appear to share similar priors.

In the final part of our paper, we exploit the tight link between the experimental design and the model to estimate key parameters for models of social preferences, time preferences, and reference dependence. We also use the expert forecasts to estimate expert beliefs about these same parameters.

We explore complementary findings on expert forecasts in a companion study (DellaVigna and Pope 2016b). We present different measures of expert accuracy, comparing individual forecasts with the average forecast. We also consider determinants of expert accuracy and compare the predictions of academic experts to those of other groups of forecasters, including PhDs, undergraduates, MBAs and MTurkers. Finally, we examine beliefs of experts about their own expertise and the expertise of others.

Our findings relate to a vast literature on behavioural motivators. While we cannot cite all related papers, our treatments relate to the literature on pro-social motivation (Andreoni 1989, 1990), crowding-out (Gneezy and Rustichini 2000), present-bias (Laibson 1997, O'Donoghue and Rabin 1999), and reference dependence (Kahneman and Tversky 1979, Koszegi and Rabin 2006), among others. Several of our treatments have parallels in the literature, such as Imas (2014) and Tonin and Vlassopoulos (2015) on real effort and charitable giving. Two main features set our study apart. First, we consider all the above motivators in one common environment. Second, we compare the effectiveness of the behavioural interventions with experts' expectations of that effectiveness.

The emphasis on expert forecasts ties this paper to a small literature on forecasts of research results. Coffman and Niehaus (2014) include a survey of seven experts on persuasion, while Sanders et al. (2015) ask 25 faculty and students from two universities questions on the results of select experiments run by the UK Nudge Unit. Groh et al. (2015) elicit forecasts on the effect of an RCT from audiences of four academic presentations. These complementary efforts suggest the need for a more systematic collection of expert beliefs about research findings.

References

Andreoni, J (1989), "Giving with Impure Altruism: Applications to Charity and Ricardian Equivalence", Journal of Political Economy 97(6): 1447-1458

Andreoni, J (1990), "Impure Altruism and Donations to Public Goods: A Theory of Warm-Glow Giving", The Economic Journal 100(401): 464-477

Cialdini, R B, P W Schultz, J M Nolan, N J Goldstein, and V Griskevicius (2007), "The Constructive, Destructive, and Reconstructive Power of Social Norms", Psychological Science 18(5)

Coffman, L, and P Niehaus (2014), "Pathways of Persuasion", Working paper

DellaVigna, S, and D Pope (2016a), "What Motivates Effort? Evidence and Expert Forecasts", NBER Working Paper No. 22193

DellaVigna, S, and D Pope (2016b), "Predicting Experimental Results: Who Knows What?", Working paper

Gneezy, U, and A Rustichini (2000), "Pay Enough or Don't Pay at All", Quarterly Journal of Economics 115(3): 791-810

Groh, M, N Krishnan, D McKenzie, and T Vishwanath (2015), "The Impact of Soft Skill Training on Female Youth Employment: Evidence from a Randomized Experiment in Jordan", Working paper

Hossain, T, and J A List (2012), "The Behavioralist Visits the Factory: Increasing Productivity Using Simple Framing Manipulations", Management Science 58(12): 2151-2167

Imas, A (2014), "Working for the 'Warm Glow': On the Benefits and Limits of Prosocial Incentives", Journal of Public Economics 114: 14-18

Kahneman, D, and A Tversky (1979), "Prospect Theory: An Analysis of Decision Under Risk", Econometrica 47(2): 263-292

Koszegi, B, and M Rabin (2006), "A Model of Reference-Dependent Preferences", Quarterly Journal of Economics 121(4): 1133-1165

Kuziemko, I, M I Norton, E Saez, and S Stantcheva (2015), "How Elastic Are Preferences for Redistribution? Evidence from Randomized Survey Experiments", American Economic Review 105(4): 1478-1508

Laibson, D (1997), "Golden Eggs and Hyperbolic Discounting", Quarterly Journal of Economics 112(2): 443-477

Loewenstein, G, T Brennan, and K G Volpp (2007), "Asymmetric Paternalism to Improve Health Behaviors", Journal of the American Medical Association 298(20): 2415-2417

O'Donoghue, E, and M Rabin (1999), "Doing It Now or Later", American Economic Review 89(1): 103-124

Paolacci, G, and J Chandler (2014), "Inside the Turk: Understanding Mechanical Turk as a Participant Pool", Current Directions in Psychological Science 23(3): 184-188

Sanders, M, F Mitchell, and A N Chonaire (2015), "Just Common Sense? How well do experts and lay-people do at predicting the findings of Behavioural Science Experiments", Working paper

Thaler, R H, and C R Sunstein (2009), Nudge: Improving Decisions About Health, Wealth, and Happiness, New York: Penguin Group

Tonin, M, and M Vlassopoulos (2015), "Corporate Philanthropy and Productivity: Evidence from an Online Real Effort Experiment", Management Science 61(8): 1795-1811

Tversky, A, and D Kahneman (1992), "Advances in prospect theory: Cumulative representation of uncertainty", Journal of Risk and Uncertainty 5(4): 297-323