The extent of monopsony – employer market power – in the labour market is an important economic question. Allowing for the possibility that firms have some ability to set wages has wide-ranging implications – from gaining a better understanding of wage differentials across similar workers to explaining how minimum wages can raise wages with limited impact on employment (Card and Krueger 1995, Manning 2003). While there is growing interest in the potential importance of monopsony power (e.g. Azar et al. 2018, Benmelech et al. 2018), we can benefit from a clear proof that labour market power exists, especially outside of ‘company towns’ or specific, highly concentrated markets.

Online labour markets represent a particularly compelling arena for such a demonstration. In many ways, they appear as frictionless settings with a large number of participants, where information about pay is easily observed and costs of switching across tasks seem to be low. While a number of prior studies have suggested that employers in online labour markets have a surprising degree of market power, they have stopped short of quantifying it. In our recent paper, we rigorously estimate the degree of requester market power in a widely used online labour market – Amazon Mechanical Turk, or MTurk (Dube et al. 2018). This is the most popular online micro-task platform, allowing requesters (employers) to post jobs which workers can complete for pay.

We provide evidence on labour market power by measuring how sensitive workers’ willingness to work is to the reward offered. The labour supply elasticity facing a firm is a standard measure of wage-setting (monopsony) power. For example, if lowering wages by 10% leads to a 1% reduction in the workforce, this represents an elasticity of 0.1. While there is a large literature on the labour supply elasticity to the market, the evidence on the elasticity facing individual employers is much more limited.
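The arithmetic in this example can be written out explicitly. The symbols below are notation introduced here for illustration, not taken from the paper: $L$ is the number of workers willing to work for the employer, $w$ is the wage (reward), and $\varepsilon$ is the labour supply elasticity facing the employer.

```latex
\varepsilon
  = \frac{\Delta L / L}{\Delta w / w}
  = \frac{-1\%}{-10\%}
  = 0.1
```

A perfectly competitive employer would face $\varepsilon \to \infty$ (any wage cut loses the whole workforce), so values this close to zero indicate substantial wage-setting power.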

We take two different approaches to measuring this: the first is observational, while the second uses randomised experiments. For the observational approach, we use data from a near-universe of tasks scraped from MTurk and see how the offered reward affected the time it took to fill a particular task.

Of course, there are many possible ways in which high- and low-reward tasks may differ, and it is important to control for these differences. The scraped data have a tremendous amount of information about the tasks, from the textual description as well as the meta-data. We use newly developed tools from machine learning to control for the relevant characteristics of these tasks, and estimate how similar jobs with different wages get filled at different rates. In particular, we isolate plausibly exogenous differences in rewards using the double-machine-learning method (Chernozhukov et al. 2018), which controls for a highly predictive function of observables generated from a rich array of textual and numeric metadata. The estimator works by using machine learning to train a prediction algorithm for both the independent variable (log rewards) and the dependent variable (log duration of task posting), separately, using a large set of covariates in one split of the data. The trained models are then applied to the other split of the data to predict both variables. The residual of the dependent variable (the difference between its true and predicted value) is then regressed on the residual of the independent variable, in the spirit of the Frisch-Waugh-Lovell theorem. The procedure is then repeated with the splits exchanged, so that the data used for prediction are kept separate from the data used for estimating the causal effect.
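The cross-fitting procedure described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the data are simulated, there is a single hypothetical covariate `x`, the true coefficient `true_slope = -0.1` is made up, and a simple binned-mean predictor stands in for the machine-learning step so that the example needs only the standard library. Pooling the residuals from both folds before the final regression is also a choice made here.

```python
import random
from statistics import fmean

def fit_binned_mean(x, y, n_bins=20):
    """Stand-in learner: predict y by its mean within equal-width bins of x in [0, 1)."""
    sums, counts = [0.0] * n_bins, [0] * n_bins
    for xi, yi in zip(x, y):
        b = min(int(xi * n_bins), n_bins - 1)
        sums[b] += yi
        counts[b] += 1
    overall = fmean(y)
    means = [s / c if c else overall for s, c in zip(sums, counts)]
    return lambda xi: means[min(int(xi * n_bins), n_bins - 1)]

def ols_slope(d, y):
    """Slope from a simple regression of y on d (with intercept)."""
    d_bar, y_bar = fmean(d), fmean(y)
    num = sum((di - d_bar) * (yi - y_bar) for di, yi in zip(d, y))
    den = sum((di - d_bar) ** 2 for di in d)
    return num / den

def cross_fit_slope(x, log_reward, log_duration):
    """Two-fold cross-fitting: predict on one split, residualise on the other, then swap."""
    n = len(x)
    folds = [list(range(0, n, 2)), list(range(1, n, 2))]
    res_reward, res_duration = [], []
    for k in (0, 1):
        train, test = folds[k], folds[1 - k]
        predict_reward = fit_binned_mean([x[i] for i in train],
                                         [log_reward[i] for i in train])
        predict_duration = fit_binned_mean([x[i] for i in train],
                                           [log_duration[i] for i in train])
        for i in test:
            res_reward.append(log_reward[i] - predict_reward(x[i]))
            res_duration.append(log_duration[i] - predict_duration(x[i]))
    # Residual-on-residual regression (Frisch-Waugh-Lovell logic).
    return ols_slope(res_reward, res_duration)

# Simulated data: the covariate x raises both the reward and the duration,
# so a regression without controls is confounded.
random.seed(0)
n = 20000
true_slope = -0.1  # hypothetical effect of log reward on log duration
x = [random.random() for _ in range(n)]
log_reward = [xi + random.gauss(0, 0.5) for xi in x]
log_duration = [true_slope * r + 2 * xi + random.gauss(0, 0.5)
                for r, xi in zip(log_reward, x)]

naive = ols_slope(log_reward, log_duration)
estimate = cross_fit_slope(x, log_reward, log_duration)
print(f"naive slope: {naive:.3f}, cross-fitted slope: {estimate:.3f}")
```

In this simulation the uncontrolled regression has the wrong sign, while the cross-fitted estimate lands close to the hypothetical true coefficient. In practice a flexible learner such as a random forest would replace `fit_binned_mean`, and the covariates would be high-dimensional.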

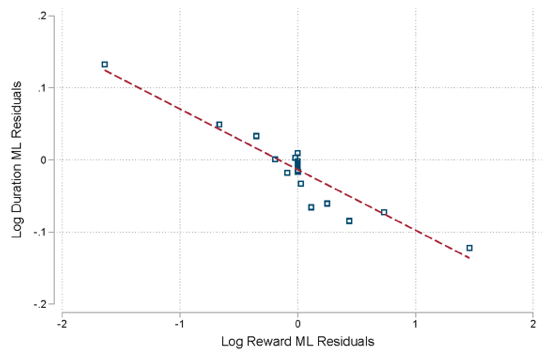

We implement this estimator using Random Forests (Breiman 2001) with two splits, each with 80% training and 20% test. Importantly, we obtain high out-of-sample predictive accuracy on both rewards and duration, which limits the scope for remaining omitted variables bias. Figure 1 shows the binned scatterplot of the residuals, and suggests the log-log specification is capturing a good deal of the variation we are using to estimate the labour supply elasticity to the requester.

Figure 1 Binned scatterplot of ML-adjusted residuals

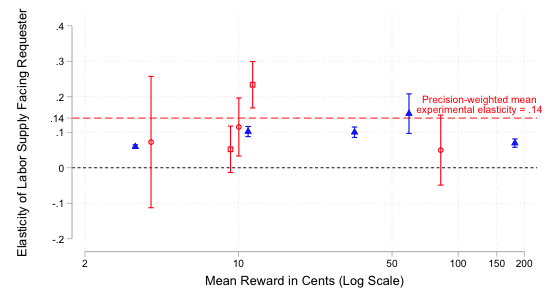

Despite leveraging observational data as best we can, concerns about omitted variables may remain. We also present results using experimental variation, and analyse data from five previous experiments that randomised the wages of MTurk subjects. This randomised reward-setting provides ‘gold-standard’ evidence on market power, as we can see how MTurk workers responded to different wages. While the previous experimenters had randomly varied the wage, none except Dube et al. (2017) recognised that they had estimated a task-specific labour supply curve, nor noticed that this reflected monopsony power on the MTurk marketplace. We empirically estimate both a ‘recruitment’ elasticity (comparable to what is recovered from the observational data) where workers see a reward and associated task as part of their normal browsing for jobs, and a ‘retention’ elasticity where workers, having already accepted a task, are given an opportunity to perform additional work for a randomised bonus payment.
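To make concrete how a randomised reward translates into an elasticity, the sketch below computes a log-log slope between two experimental arms. All numbers are hypothetical and chosen only for illustration, and the two-arm comparison is an assumption made here rather than the specification used in the paper.

```python
import math

def arm_elasticity(reward_low, reward_high, share_low, share_high):
    """Change in log acceptance share divided by change in log reward."""
    return (math.log(share_high) - math.log(share_low)) / (
        math.log(reward_high) - math.log(reward_low)
    )

# Hypothetical experiment: doubling the reward from $0.10 to $0.20
# raises the share of workers accepting the task from 50% to 55%.
elasticity = arm_elasticity(0.10, 0.20, 0.50, 0.55)
print(round(elasticity, 3))
```

Because the reward is assigned at random, the difference in acceptance between arms cannot be attributed to differences in task characteristics, which is what makes this comparison more credible than the observational one.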

Together, these very different pieces of evidence provide a remarkably consistent estimate of the labour supply elasticity facing MTurk requesters. As shown in Figure 2, the precision-weighted average experimental requester’s labour supply elasticity is 0.13 – this means that if a requester paid a 10% lower reward, they’d only lose around 1% of workers willing to perform the task. This suggests a very high degree of market power. The experimental estimates are quite close to those produced using the machine-learning based approach using observational data, which also suggest around 1% reduction in the willing workforce from a 10% lower wage. To put this into perspective, if requesters are fully exploiting their market power, our evidence implies that they are paying workers less than 20% of the value added. This suggests that much of the surplus created by this online labour market platform is captured by employers.
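Two calculations sit behind this paragraph, and a short sketch may help. The first is a precision-weighted (inverse-variance) average; the estimates and standard errors passed to it below are hypothetical, not the values from the experiments. The second assumes the textbook static monopsony condition, under which a profit-maximising employer pays a share ε/(1+ε) of the marginal product; the article does not spell out its formula, so treat this as an assumption.

```python
def precision_weighted_mean(estimates, std_errors):
    """Average estimates with weights proportional to 1 / SE^2."""
    weights = [1.0 / se ** 2 for se in std_errors]
    return sum(w * e for w, e in zip(weights, estimates)) / sum(weights)

def wage_share_of_marginal_product(elasticity):
    """Textbook monopsony markdown: wage / marginal product = e / (1 + e)."""
    return elasticity / (1.0 + elasticity)

# Hypothetical elasticities and standard errors from two experiments.
pooled = precision_weighted_mean([0.10, 0.20], [0.02, 0.04])

# The pooled experimental elasticity reported in the text.
share = wage_share_of_marginal_product(0.13)
print(f"pooled (hypothetical inputs): {pooled:.2f}, wage share at 0.13: {share:.3f}")
```

Under that assumption, an elasticity of 0.13 implies a wage of roughly 11.5% of the marginal product, which is consistent with the "less than 20%" figure.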

Figure 2 Experimental and observational estimates of monopsony power in MTurk

In his review of Manning’s 2003 book Monopsony in Motion, Peter Kuhn made the following conjecture: “[U]pward-sloping labour supply curves—whether induced by search or other factors—seem unlikely to me to be a serious constraint for most firms. This seems even more likely to be the case in the near future, as ... information technology has the potential to reduce search frictions.” (Kuhn 2004: 376). The emergence of online platforms represents an idealised environment where search costs are presumably very low. Despite this, we find a highly robust and surprisingly high degree of market power even in this large and diverse spot labour market.

Concluding remarks

Why might a platform like MTurk allow so much monopsony power? One possibility is that requesters are on the more elastic side of the crowdsourcing market. This would make it profitable for Amazon to provide a platform that enables the bulk of the surplus to be captured by employers rather than workers.

If online markets for data labour exhibit such extreme market power, it is perhaps unsurprising that many companies effectively pay zero wages for data they collect from users of their platforms. Another consequence of online monopsony is inefficiency – machine learning or social science researchers who collect data from MTurk may have inefficiently small samples or take too long to obtain target sample sizes. Hence there may be overinvestment in data processing relative to data collection.

MTurk workers and their advocates have long noted the asymmetry in market structure between workers and requesters. Both efficiency and equality concerns have led to the rise of competing, ‘worker-friendly’ platforms such as Stanford’s Dynamo, and mechanisms for sharing information about good and bad requesters such as Turkopticon and online discussion fora. Scientific funders such as Russell Sage have instituted minimum wages for crowd-sourced work. Our results suggest that these sentiments and policies may have an economic justification, and that the recent literature documenting pervasive monopsony also extends to online labour markets. Moreover, the hope that information technology will necessarily reduce search frictions and monopsony power in the labour market may be misplaced.

References

Azar, J A, I Marinescu, M I Steinbaum and B Taska (2018), “Concentration in US labor markets: Evidence from online vacancy data”, NBER Working paper w24395.

Benmelech, E, N Bergman and H Kim (2018), “Strong employers and weak employees: How does employer concentration affect wages?”, NBER Working paper w24307.

Breiman, L (2001), “Random forests”, Machine Learning 45(1): 5–32.

Card, D and A Krueger (1995), Myth and measurement, Princeton University Press.

Chernozhukov, V, D Chetverikov, M Demirer, E Duflo, C Hansen, W Newey and J Robins (2018), “Double/debiased machine learning for treatment and structural parameters”, The Econometrics Journal 21(1): C1–C68.

Dube, A, S Naidu and A Manning (2017), “Monopsony and employer mis-optimization explains bunching of wages at round numbers”, Mimeo.

Dube, A, J Jacobs, S Naidu and S Suri (2018), “Monopsony in online labor markets”, NBER Working paper w24416.

Kuhn, P (2004), “Is monopsony the right way to model labor markets? A review of Alan Manning’s Monopsony in Motion”, International Journal of the Economics of Business 11(3): 369–378.

Manning, A (2003), Monopsony in motion: Imperfect competition in labor markets, Princeton University Press.