Economics is unusual among academic disciplines in the emphasis it places on publication in a narrow set of top journals:

- For four decades at least, publishing in The American Economic Review, Econometrica, Journal of Political Economy, Quarterly Journal of Economics, or Review of Economic Studies has been viewed as qualitatively different from publishing in other journals.

Many economists and economics departments view their peers as falling into two groups: those who have at least one top-five publication and those who don’t.

- The relative ranking of the five has reshuffled over the years but the AER, JPE, QJE, RES, and ECMA have always been at the head table.

A set of well-established norms, such as “at least one top-five publication for tenure”, has followed from this concentration. The apparent necessity of publishing in the top-five suggests low substitution possibilities between these journals and others. One well-published blogger summarises this as:

“The economics profession rewards one research paper in a top five journal more than say five good publications in journals outside this narrow set…” (McKenzie 2014).

What does the evidence say?

Despite this general perception, there is little robust empirical evidence to support the quantum superiority of these journals. The view may rest on circular logic: “good economists are the ones who publish in these top-five journals, and these top journals are special because they are where the good economists publish”.

Along with my collaborators, I report results in Economic Inquiry questioning whether publishing in the top-five journals brings rewards that differ distinctly from rewards for publishing elsewhere (Gibson, Anderson and Tressler 2014). We relate salaries of 223 University of California economists to their lifetime research – 5720 articles in 700 different journals. There are unusually detailed public disclosure salary data for the University of California, and it is the largest research-intensive public university system in the US.

Wages rather than citation data

We use labour-market information rather than citation-based measures of journal quality. When faculty debate the merits of publication in one journal or another for setting salaries, they are choosing between actions with costly consequences. In contrast, there is almost no cost to authors of citing superfluous references, so citations are open to strategic manipulation.

For example, editors may coerce authors into adding citations in order to raise the impact factor of the journal (Wilhite and Fong 2012). Rankings can remove journal self-citations but more subtle patterns may evolve. For example, the journal Technological and Economic Development of Economy (TEDE) rose to third in the economics category of the ISI Journal Citation Reports in 2010 (behind JEL and QJE and ten places ahead of AER). This journal is published on behalf of Vilnius Gediminas Technical University in Lithuania and 60% of citations in 2010 to articles published in TEDE came from five journals published by or for this same university and a further 23% of citations came from journals published by two neighbouring universities.

Even harder to detect is the action of authors preemptively expanding bibliographies and literature reviews in response to the desire of referees to be cited. Spiegel (2012) shows the ‘bibliographical bloat’ that results: over a 30-year period the median number of articles cited by papers in the Journal of Finance almost quadrupled, from 13 in 1980 to 44 in 2010, while the length of Introductions, where many of these citations are inserted, more than quadrupled. Given these trends in citations, the labour market may be a more discriminating source of evidence on perceived journal quality.

Labour-market evidence

Our research revealed some surprising facts:

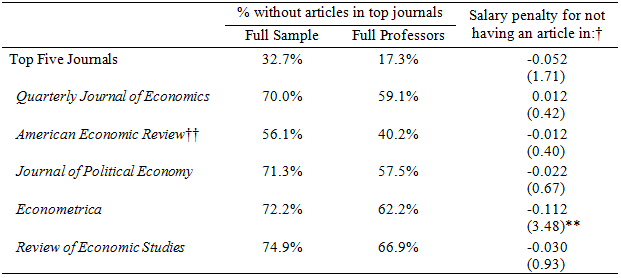

- Many economists at research-intensive universities do not have top-five publications; one third of our sample of University of California economists had not published in any of the top-five journals (Table 1).

- Even among tenured full professors, one in six have never published in the top-five journals; economists without a top-five publication are found in eight of the nine University of California economics departments.

Not having an article in a top-five journal evidently is not an impossible barrier to building a career in a prestigious public university system in the US.

- Turning to the effect on salary, conditional on overall research output, there is no statistically significant penalty for economists lacking an article in top-five journals.

The measure of output used here is the number of age-, size-, quality- and coauthor-adjusted pages published over each economist’s career (until the end of 2010). By itself, this measure explains 52% of the variation in log salary, and after adding demographic characteristics and campus fixed effects the earnings equations explain over three-quarters of the variation in log salary.

Table 1. Salary effect of not having a top-five journal in the publication record

Note: Based on 223 economists at the University of California. Robust t-statistics in ( ); * significant at 5%; ** at 1%.

†Estimates of the salary penalty are based on regressions of log of 2010 base salary on overall research output (weighted by journal quality using Combes-Linnemer medium convexity weights, age of the article, page size and number of co-authors), quadratics in seniority and experience, dummies for gender, type of contract, whether holding a named chair, whether a Nobel Prize winner, and fixed effects for each UC campus, with the treatment variable a dummy for whether the academic had not published in the journal or group of journals listed in the first column.

†† The Papers and Proceedings issue of the AER is excluded from these calculations, since it is not a refereed contribution.
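As a reading aid, the regression described in the first table note can be written out roughly as follows. This is only a sketch: the symbols are illustrative notation rather than the authors' own, and the note does not say whether output enters linearly or in some other form, so a linear term is assumed here.

$$
\ln(\text{salary}_i) = \alpha + \delta\,\text{NoPub}_i + \beta\,\text{Output}_i + \gamma_1 S_i + \gamma_2 S_i^2 + \theta_1 E_i + \theta_2 E_i^2 + X_i'\lambda + \mu_{c(i)} + \varepsilon_i
$$

Here $\text{NoPub}_i$ is the dummy for economist $i$ not having published in the journal or group of journals in question, $\text{Output}_i$ is the adjusted career output measure, $S_i$ and $E_i$ are seniority and experience, $X_i$ collects the dummies for gender, type of contract, named chair and Nobel Prize, and $\mu_{c(i)}$ is the fixed effect for the economist's UC campus. The salary penalty reported in Table 1 corresponds to $\delta$.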

- The lack of penalty for not having a top-five journal in the publication portfolio does not depend on which journal ranking scheme is used to aggregate across the different journals that each economist published in.

We use nine rankings from the literature and all give the same pattern. The particular scheme used for the results in Table 1 is the ‘medium convexity’ variant from Combes and Linnemer (2010), which is the one most congruent with the salary data. To show the substitution possibilities entailed by this scheme, the number of articles in lower ranked general interest journals equivalent to one QJE article of the same size is as follows: Economic Journal [1.6], Journal of the European Economic Association (JEEA) [1.8], Scandinavian Journal of Economics [3.3], Canadian Journal of Economics [3.9], Economic Inquiry [4.1].

All of these conversion factors entail much smoother substitution than indicated by the McKenzie (2014) quote above, that one top-five is worth more than five articles in good journals outside the top-five.

- In other words, the Table 1 regressions show that a University of California economist with no articles in top-five journals but with two JEEA articles for every QJE article of another, otherwise similar, economist would not have a significantly lower salary.

Alternatively, someone who produces four Economic Inquiry articles for every QJE of an equivalent colleague would not have a lower salary.
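The substitution arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: it uses only the conversion factors quoted in the text, assumes all articles are the same size, and ignores the age and co-author adjustments in the full output measure. The function name `qje_equivalents` and the example portfolio are hypothetical.

```python
# Articles needed to match one QJE article of the same size, as quoted in the
# text (Combes-Linnemer 'medium convexity' weights). The QJE entry is 1.0 by
# construction.
ARTICLES_PER_QJE = {
    "Quarterly Journal of Economics": 1.0,
    "Economic Journal": 1.6,
    "Journal of the European Economic Association": 1.8,
    "Scandinavian Journal of Economics": 3.3,
    "Canadian Journal of Economics": 3.9,
    "Economic Inquiry": 4.1,
}


def qje_equivalents(portfolio):
    """Convert a portfolio {journal: number of same-size articles} into
    QJE-equivalent articles. Hypothetical helper for illustration only."""
    return sum(
        count / ARTICLES_PER_QJE[journal] for journal, count in portfolio.items()
    )


# Hypothetical economist with no top-five articles.
no_top_five = {
    "Journal of the European Economic Association": 2,
    "Economic Inquiry": 4,
}
print(round(qje_equivalents(no_top_five), 2))
```

Under these assumptions, two JEEA articles count for slightly more than one QJE article, and four Economic Inquiry articles for slightly less than one, which is the rounding behind the "two for one" and "four for one" statements above.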

- When each journal is considered separately, the only significant effect that shows up is that, conditional on overall research output, not having an Econometrica article in a portfolio gives a salary penalty of 12%.

None of the other four journals shows any significant salary penalty from its absence. Interestingly, Econometrica is the top-five journal that saw a fall in relative impact according to citations (Card and DellaVigna 2013). The fact that citation and labour-market data imply opposite rankings shows that an uncritical reliance on citations for ranking journals is unwarranted.

Implications

Finding that publishing in the top-five journals does not bring rewards that differ distinctly from publishing in other journals is clearly of interest to economists developing their careers. But this result may have broader significance for at least three reasons.

- First, overstatement of incentives to publish in the top-five may create an inefficient myopia.

Hudson (2013) notes that many issues, such as the environment and governance, can benefit from the toolkit and perspective of a good economist, but an exclusive focus on getting into top-five journals may deter economists from publishing in journals in these areas.

- Second, geographical bias may be warping research choices.

Das and Do (2014) show that the probability of publication in top-five journals is much larger for papers on the United States relative to other countries. Economists who think the top-five are more important than they truly are might ignore applied research opportunities in the many countries of the world where the production of research is currently inadequate.

- Third, showing that the top-five are not as special as many economists may think provides reassurance about the concentration of power and the control of the research agenda of the discipline.

Currently about 40% of top-five publications are in The American Economic Review, giving editors of this journal potentially substantial power to shape the future direction of economics as well as the careers of particular economists.

- Finding that the academic labour market values publication in other journals almost as highly as in the top-five suggests that new directions for theory and evidence might show up in many journals and not necessarily be ignored.

References

Card, D and S DellaVigna (2013), “Nine Facts About Top Journals in Economics”, Journal of Economic Literature 51(1): 144-161.

Combes, P and L Linnemer (2010), “Inferring Missing Citations: A Quantitative Multi-Criteria Ranking of all Journals in Economics”, Groupement de Recherche en Economie Quantitative d’Aix Marseille (GREQAM), Document de Travail, no 2010-28.

Das, J and Q-T Do (2014), “US and them: The geography of academic research”, VoxEU.org, 11 February.

Gibson, J, D Anderson and J Tressler (2014), “Which Journal Rankings Best Explain Academic Salaries? Evidence from the University of California”, Economic Inquiry.

Hudson, J (2013), “Challenging Times in Academia”, VoxEU.org, 11 November.

McKenzie, D (2014), “Is the Impact Evaluation Production Function O-ring or a Knowledge Hierarchy?”, Development Impact (The World Bank), 7 April.

Spiegel, M (2012), “Reviewing less—Progressing more”, Review of Financial Studies 25(5): 1331-1338.

Wilhite, A and E Fong (2012), “Coercive Citation in Academic Publishing”, Science 335: 542-543.