The COVID-19 pandemic, caused by the SARS-CoV-2 virus, is currently dominating the physical and economic wellbeing of individuals and societies all over the world.1 We have been producing real-time short-term forecasts of confirmed cases and deaths for many parts of the world on an almost daily basis. Our forecasts2 have been published from 20 March onwards, and have largely been reliable indicators of what can be expected to happen in the next week. The data come from the Johns Hopkins University Center for Systems Science and Engineering data repository,3 with the data for the states of the US courtesy of the New York Times.4

Models based on well-established theoretical understanding and available evidence are crucial to viable policymaking in many disciplines, especially observational-data ones like economics, other social sciences and epidemiology. Nevertheless, in economics there is a long history of relatively simple data-based devices ‘out-forecasting’ formal ‘structural’ models. The reason why this occurs applies well outside of economics: shifts in the distributions of variables from their past behaviour lead to systematic mis-forecasting in all models in the equilibrium-correction class. That class comprises almost all widely used models, from regressions, scalar and vector autoregressions, through dynamic stochastic general equilibrium models and cointegrated systems, to volatility models like autoregressive conditional heteroskedasticity and its generalised form (GARCH).

Models of pandemics like the present coronavirus outbreak face this problem. Epidemiological models have a sound theoretical basis and a history of useful applications. However, novel viruses can behave in different ways from what models assume (e.g. ‘recovered’ individuals remaining infectious agents for several weeks), and policy reactions to early predictions of mass deaths can shift distributions suddenly in ways that can be difficult to model formally. Such data are therefore highly non-stationary. Indeed, the methodologies used for reporting the pandemic data are also non-stationary, with stochastic trends, such as the ramping up of infection and antibody testing, and distributional shifts, such as the sudden inclusion of care home cases. Thus, there is a compounding effect as the non-stationarity of the underlying data interacts with the non-stationarity of the reporting process.

Viable forecasting models must be able to handle this quadruple non-stationarity: two forms (stochastic trends and shifts) from two sources (outcomes and measurements thereof). Epidemiological models are driven by their assumptions, which, together with the assumed mathematical processes, can limit their usefulness in forecasting as they are not empirical enough. Consequently, an important role in short-term forecasting after distributional shifts remains for adaptive data-based models using a class we call ‘robust’. These models avoid systematic forecast failure after sudden distributional shifts, although it is important that adaptability remains firmly controlled when forecasting to avoid excess volatility. Importantly, a noticeable drop in outcomes in comparison to the baseline extrapolations from such models can be an indication that implemented policies are having some impact, a topic we return to below.
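The contrast between an equilibrium-correction forecast and a robust device after a sudden shift can be illustrated with a minimal sketch. It is a toy example, not the models used for the published forecasts: the series, the shift and both forecasting functions are hypothetical, with the in-sample mean standing in for the equilibrium-correction class and the last observation standing in for a robust (differenced) device.

```python
from statistics import mean


def mean_forecast(history):
    """Equilibrium-correction stand-in: forecast the in-sample mean."""
    return mean(history)


def robust_forecast(history):
    """Robust (differenced) stand-in: forecast the last observed value."""
    return history[-1]


def one_step_errors(forecaster, data, start):
    """One-step-ahead errors from `start` onwards, updating the history each period."""
    return [data[t] - forecaster(data[:t]) for t in range(start, len(data))]


# Hypothetical series: level 10 for 20 periods, then a sudden shift to level 20.
series = [10.0] * 20 + [20.0] * 10

ec_errors = one_step_errors(mean_forecast, series, 20)
robust_errors = one_step_errors(robust_forecast, series, 20)

print("mean-based errors:", [round(e, 2) for e in ec_errors])
print("robust errors:    ", robust_errors)
```

In this toy series the robust device misses only the first post-shift observation and is then back on track, whereas the mean-based forecast keeps under-forecasting in every post-shift period because it is pulled back towards the old level.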

The forecasts on our website5 are derived from such ‘robust’ models. Our aim has been to provide short-term forecasts of the numbers of confirmed cases and of deaths attributed to COVID-19 as a guide to planning for the next few days, and so that the next reported numbers would not always come as a surprise. The basis of these reported figures differs across countries and over time: some countries use only hospital counts, some add in other locations like care homes, and some do much more infection testing whereas others only record cases serious enough to need medical intervention. Occasionally the basis is switched, as happened in China. Thus, our forecasts are of the next reports for the recording system then in use. Robustness after changes in the reporting basis is also an important attribute of our methods: the next forecast will obviously be incorrect, but updating the following forecasts will move them quickly back on track.

The methodology to construct the short-term forecasts involves several steps. First, each observed daily time series is decomposed into a trend and a remainder term. The trend is estimated by taking moving windows of the data and saturating these by linear trends (Doornik 2019). Selection from these trends is made with an econometric machine-learning algorithm (Doornik 2009), and the selected linear trends are then averaged to give the overall flexible trend. Next, the trend and remainder terms are forecast separately using the Cardt method and recombined in a final forecast (Castle et al. 2019). A second, averaged forecast is also reported and shown graphically; it averages over many forecast paths, because countries differ substantially in their degree of variation. Cardt is an improved version of the Card method that we used in the M4 competition,6 and is an acronym for Calibrated Average of Rho and Delta (an autoregression and a differenced model) (Doornik et al. 2020, Makridakis et al. 2020). Further information about the forecasting device and references can be found in Castle et al. (2020), available on our COVID-19 website.
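The decompose, forecast and recombine steps described above can be illustrated with a deliberately simplified sketch. It is not the authors' Cardt implementation: the trend here is a plain average of linear fits over all moving windows (there is no model-selection step), and the forecast is an equal-weight, uncalibrated average of a least-squares AR(1) path ("Rho") and a mean-difference path ("Delta"). All function names, the window length and the counts are hypothetical.

```python
def fit_line(ys):
    """Least-squares line through (0, ys[0]), (1, ys[1]), ...; returns (intercept, slope)."""
    n = len(ys)
    x_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(ys))
    slope = sxy / sxx
    return y_mean - slope * x_mean, slope


def local_average_trend(ys, window):
    """Average the fitted values of linear trends over every moving window.

    Assumes 2 <= window <= len(ys). No trend selection is performed here.
    """
    totals = [0.0] * len(ys)
    counts = [0] * len(ys)
    for start in range(len(ys) - window + 1):
        intercept, slope = fit_line(ys[start:start + window])
        for i in range(window):
            totals[start + i] += intercept + slope * i
            counts[start + i] += 1
    return [total / count for total, count in zip(totals, counts)]


def rho_forecast(ys, horizon):
    """'Rho': AR(1) with intercept, fitted by least squares and iterated forward."""
    x, y = ys[:-1], ys[1:]
    x_mean, y_mean = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((a - x_mean) ** 2 for a in x)
    sxy = sum((a - x_mean) * (b - y_mean) for a, b in zip(x, y))
    rho = sxy / sxx if sxx else 0.0  # constant series: fall back to the mean
    const = y_mean - rho * x_mean
    path, last = [], ys[-1]
    for _ in range(horizon):
        last = const + rho * last
        path.append(last)
    return path


def delta_forecast(ys, horizon):
    """'Delta': extrapolate the last value by the mean first difference."""
    drift = (ys[-1] - ys[0]) / (len(ys) - 1)
    return [ys[-1] + drift * (h + 1) for h in range(horizon)]


def averaged_forecast(ys, horizon):
    """Equal-weight average of the Rho and Delta paths (no calibration)."""
    rho_path = rho_forecast(ys, horizon)
    delta_path = delta_forecast(ys, horizon)
    return [(r + d) / 2 for r, d in zip(rho_path, delta_path)]


def forecast_series(ys, horizon, window):
    """Decompose into trend + remainder, forecast each, then recombine."""
    trend = local_average_trend(ys, window)
    remainder = [y - t for y, t in zip(ys, trend)]
    trend_path = averaged_forecast(trend, horizon)
    remainder_path = averaged_forecast(remainder, horizon)
    return [t + r for t, r in zip(trend_path, remainder_path)]


# Hypothetical cumulative counts for ten days
counts = [100, 130, 170, 220, 260, 310, 370, 420, 480, 530]
print(forecast_series(counts, horizon=3, window=5))
```

Forecasting the trend and the remainder separately lets a smooth component and a noisy component each be extrapolated on their own terms before being added back together. The calibration step that gives Cardt its name is omitted here.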

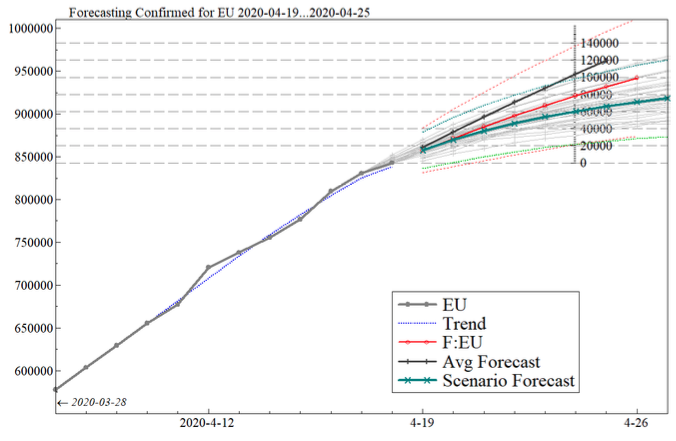

Figure 1 shows an example graph for the number of confirmed cases in the EU, including a scenario forecast, as it appeared on 2020-04-20 (edited for clarity here). The figure shows in thick grey (with dots) the confirmed cases of COVID-19 as collected by our data sources (based on WHO reports). Next is the estimated trend (blue dotted line) with the forecasts in red (labelled F:EU, shown with 80% confidence intervals as the thin dotted red lines). The black line is the average of forecasts up to three days back (each shown in the thin grey lines). Finally, the teal line marked with crosses is the scenario forecast (with faintly visible forecast intervals in green). The left axis records cumulative cases and the right axis the numbers since the forecast origin.

Figure 1

Estimates of the peak in daily counts are made from the averaged smoothed trend constructed for cumulative counts while creating the many forecasts. Next, to allow for a genuine slowdown in new cases and deaths, we create four scenarios based on the Chinese experience earlier in the year. Automatic model selection is then used to select the closest scenario mix from a flexible lag length, which causes the initial models to have more variables than observations. These scenario forecasts are introduced when a slowdown is detected in order to have sufficient data on the paths to select the scenarios. We expect the scenario forecasts to become more reliable the further along a country is in recovery. This approach allows us to introduce stylized facts from the Chinese experience. The empirical distribution of daily confirmed counts earlier this year in China was highly skewed, with about two-thirds of the mass beyond the peak. Daily deaths were less skewed, but closer to a straight trend up, followed by a straight trend down.
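How a peak in daily counts can be read off a smoothed cumulative trend, and how much of the mass lies beyond it, can be sketched as follows. This is a minimal illustration under stated assumptions rather than the authors' procedure: the function names and the cumulative trend values are hypothetical, and the peak is simply taken as the largest first difference of the supplied trend.

```python
def daily_increases(cumulative):
    """First differences of a cumulative series, i.e. daily counts."""
    return [b - a for a, b in zip(cumulative, cumulative[1:])]


def peak_summary(cumulative_trend):
    """Locate the largest daily increase and summarise what follows it."""
    daily = daily_increases(cumulative_trend)
    peak_value = max(daily)
    peak_index = daily.index(peak_value)  # first occurrence if there are ties
    total = sum(daily)
    after = sum(daily[peak_index + 1:])
    return {
        "peak_day": peak_index + 1,  # position in the cumulative series
        "peak_increase": peak_value,
        "days_since_peak": len(daily) - 1 - peak_index,
        "share_after_peak": after / total if total else 0.0,
    }


# Hypothetical smoothed cumulative trend (not real data)
trend = [0, 10, 30, 60, 100, 130, 150, 165, 175, 180, 183]
summary = peak_summary(trend)
print(summary)
```

The `share_after_peak` entry is one simple way to express skewness of the kind described above: a value near two-thirds would mean that about two-thirds of the daily counts observed so far occurred after the peak.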

Our web page also provides some comparisons of our past forecasts with the actual outcomes. For example, Figure 2 shows confirmed cases in the EU for late March/early April. The figure shows in thick grey (with dots) the observed values. The solid red and black lines are the out-of-sample forecasts and average forecasts that were made (for the former with dotted confidence bands). In this case, the forecasts have given good indications of what was about to happen in the next week, although occasionally going up too steeply.

Figure 2

A table on the webpage records information about the peak increase in the estimated trend for deaths, including when it occurred and the number of days elapsed since the peak. A growing number of countries entering that table provides hope that the dramatic economic costs of major lockdowns have been worthwhile, and could start ending.

Authors’ note: Funding from the Robertson Foundation (grant 9907422), the Institute for New Economic Thinking (grant 20029822) and the European Research Council (grant 694262) is gratefully acknowledged.

References

Castle, J L, J A Doornik, and D F Hendry (2019), “Some forecasting principles from the M4 competition”, Economics discussion paper 2019-W01, Nuffield College, University of Oxford.

Castle, J L, J A Doornik, and D F Hendry (2020), “Medium-term forecasts of COVID-19: preliminary version”, mimeo, Nuffield College, Oxford.

Doornik, J A (2009), “Autometrics”, in J L Castle and N Shephard (eds), The Methodology and Practice of Econometrics. Oxford: Oxford University Press.

Doornik, J A (2019), “Locally averaged time trend estimation and forecasting”, mimeo, Nuffield College, Oxford.

Doornik, J A, J L Castle, and D F Hendry (2020), “Card forecasts for M4”, International Journal of Forecasting 36: 129–134.

Makridakis, S, E Spiliotis, and V Assimakopoulos (2020), “The M4 competition: 100,000 time series and 61 forecasting methods”, International Journal of Forecasting 36: 54–74.

Endnotes

1 See the latest analysis on Vox here.

2 http://www.doornik.com/COVID-19

3 https://github.com/CSSEGISandData/COVID-19

4 https://www.nytimes.com/interactive/2020/us/coronavirus-us-cases.html

5 http://www.doornik.com/COVID-19

6 The M4 competition was an international competition requiring the forecasting of 100,000 real-life time-series across a range of disciplines and frequencies. Forecasting methods included artificial intelligence, machine learning and statistical methods. See https://mofc.unic.ac.cy/m4/.